Designing assessment for academic integrity

Evidence-based recommendations for designing teaching, student support and assessment in the era of digital assessment and artificial intelligence with the aim of developing good academic practice.

4 January 2023

This toolkit focuses on designing assessments that encourage integrity and honesty, with the aim of reducing potential academic misconduct, particularly in digital assessments. It covers:

- Misconduct

- Collusion

- Plagiarism

Recent advances in artificial intelligence (AI) tools for writing, coding, sound and image production present challenges and new opportunities for the way we design our assessments.

The toolkit is aimed at those designing or adapting assessments, ranging from individual assessment activities within a module to overarching assessment strategies for entire programmes of study. It sets out a few key principles, each with practical actions for implementation.

It aims to provide strategies to mitigate academic misconduct in assessment through two basic principles:

- Good practice in designing assessments

- Educating and signposting good practice to students

The International Center for Academic Integrity defines academic integrity as a commitment, even in the face of adversity, to six fundamental values: honesty, trust, fairness, respect, responsibility, and courage. Assuring the integrity of assessment at UCL is key to maintaining academic standards, ensuring we only graduate students who have met the learning outcomes of their programme.

This applies across all assessment settings, from invigilated face-to-face exams to non-invigilated controlled conditions and assessments delivered on digital platforms such as AssessmentUCL, Moodle and Crowdmark.

Assessment design is key to academic integrity

Effective detection of cheating can play a key role and can act as a deterrent. However, this toolkit focuses on the role that assessment design can play and how we can educate students throughout their study about good academic integrity practice.

It may not be possible to design out misconduct completely, but good assessment design from the start can reduce it significantly and is something within our control.

Your understanding of academic misconduct may be informed by the idea that:

- Students have a clear and accurate understanding of what it means to act in academically dishonest ways

- Greater vigilance, achieved through in-person, paper-based, invigilated exams, reduces or prevents cheating.

Recent research challenges both these assumptions (Egan, 2018; Harper et al., 2021). No single method will stop academic misconduct, but using a series of different, layered methods will reduce its likelihood.

Methods of prevention

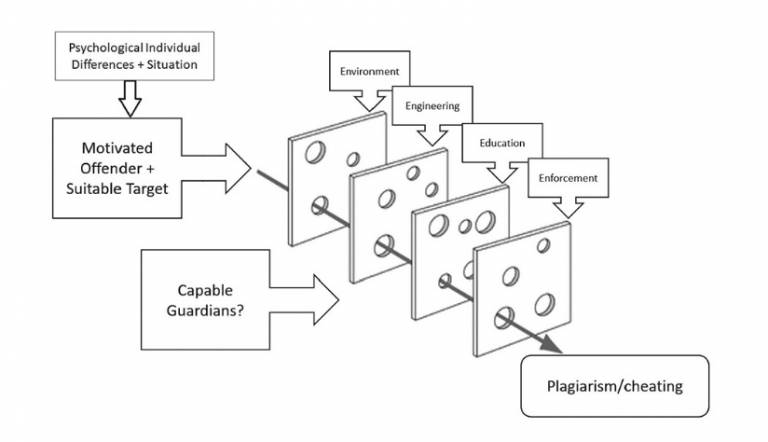

This ‘Swiss cheese’ approach, often used to describe accident or infection reduction, is advocated by Rundle et al. (2020). The model suggests that multiple layers of prevention, as shown in the diagram, can reduce plagiarism, cheating and misconduct. Methods include:

- Environment: creating a learning environment where students see examples of good practice and the culture promotes good conduct. This might also include honour codes and learning contracts that give clarity to expectations.

- Engineering: designing good assessments that reduce misconduct.

- Education: ensuring students understand what constitutes misconduct.

- Enforcement: using tools such as Turnitin and other methods to identify episodes of misconduct.

Image from Rundle et al. (2020): A modified routine activity theory model of the causes of, and barriers preventing, plagiarism and cheating.

Principles for assessment design

The following set of principles for assessment design and education provides further details of actions you can take, with links to resources and case studies.

They are divided into two sections:

- 1. Designing and setting assessments

Principle: Promote academic integrity through assessment briefing

- In briefings, such as discussions and seminars, encourage students to interpret what ethical values look like in personal or professional settings, and use assessment methods such as self-reflective tasks that bring in personal insights and ethical problem-solving scenarios.

- Use clear marking criteria and rubrics to reward positive behaviours associated with academic integrity.

- Get feedback from your colleagues on your rubrics when you design them: find out whether what they understand is what you meant!

- Use your rubric as class tutorial material: have a discussion with your students about what is important in an assignment, including academic integrity.

- Consider designing in a separate reflection component/task with allocated marks in the rubric. You could involve students in the design of this.

Examples

- [Case study] Michelle de Haan discusses how she designs and uses rubrics in the Institute of Child Health.


Principle: Design different types of assessments that motivate and challenge students to do the work themselves (or in assigned groups or pairs)

- Include opportunities for students to demonstrate their creativity, problem-solving and reflection competencies, e.g. project- or problem-based assessments. These might extend to highly personal creative works which students write about in individual assessments or discuss in small groups.

- Create assessments that require synthesis and critical analysis of data and sources rather than recall and display of factual knowledge. For example, apply Bloom's Taxonomy and use higher-level assessment activities (such as analyse, evaluate or create) that discourage a single standard answer.

- You might incorporate AI chatbots into tasks, but design assessments that require students to demonstrate a deep understanding of their subject by critiquing, comparing and refining the AI outputs.

- Encourage students to 'show their working', for example by submitting notes and drafts alongside essays, or by sharing them at early stages of the assessment when you can offer formative feedback.

- Consider incorporating some oral assessments.

Examples

- [Case study] Prof Sally Brown has gathered many examples of alternative assessments from across the sector

Principle: Ensure assessments are authentic, current and relevant

- Work-based, community-based or UCL Grand Challenges-based assessments allow meaningful, individual interpretations and provide opportunities to create portfolios for future job applications. They can also reduce opportunities for academic misconduct. The UCL Centre for Engineering Education has developed its own understanding of what ‘authentic’ means for students.

- Consider assessments that build while the students are learning, for example through regular blog or forum posts with reflections on individual learning.

Examples

- [Case study] Rochelle Burgess discusses how she uses longitudinal assessment throughout her module

- [Case study] Jane Simmonds uses reflective self-assessment for her students

- [Case study] Gemma More discusses a collaboration with the Greater London Authority that generated cross-disciplinary working and authentic assessment

- [Case study] Anson Mackay discusses how he created assessment based on blogs for Year 3 modules.

Principle: To reduce collusion, consider assessment briefs that have open-ended solutions or more than one solution

- Set open briefs where students can come up with multiple solutions and justify why they have chosen the one they have.

- Personalise assessments with online tools that can randomise questions or create individualised datasets for numerical work. To test the questions, you could enter them into AI writing tools to see what kind of answers they produce.

Resources

- AssessmentUCL Math Question Generator allows you to create different numerical questions based on parameters and generate up to 50 possible variants.

- Moodle Quiz allows you to randomise both questions and variables to create unique assessments for each student

- The Moodle STACK quiz question type allows you to deploy variants of mathematical questions.

- Dynamic data in AssessmentUCL allows you to mix up multiple choice answers and create a bank of questions with simple changing variables.
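The idea behind personalised numerical questions can be illustrated with a short sketch. The Python below is a hypothetical example and is not how AssessmentUCL, Moodle Quiz or STACK are implemented: it derives a stable seed from a student ID and an assessment ID (both hypothetical inputs), then uses that seed to choose the parameters of a simple force calculation (F = m × a). Because the seed is fixed per student, a student sees the same variant every time, while different students will usually receive different numbers.

```python
import hashlib
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    """One student's version of a parameterised numerical question (hypothetical example)."""
    student_id: str
    mass_kg: int
    accel_ms2: float

    @property
    def question(self) -> str:
        return (f"A body of mass {self.mass_kg} kg accelerates at "
                f"{self.accel_ms2} m/s^2. What net force acts on it, in newtons?")

    @property
    def answer(self) -> float:
        # Newton's second law, F = m * a, rounded to 2 decimal places for marking
        return round(self.mass_kg * self.accel_ms2, 2)


def seed_for(student_id: str, assessment_id: str) -> int:
    """Derive a stable seed so the same student always gets the same variant."""
    digest = hashlib.sha256(f"{assessment_id}:{student_id}".encode("utf-8")).hexdigest()
    return int(digest[:16], 16)  # first 64 bits of the hash


def make_variant(student_id: str, assessment_id: str) -> Variant:
    """Pick question parameters from fixed ranges using a per-student generator."""
    rng = random.Random(seed_for(student_id, assessment_id))
    mass = rng.randint(2, 50)                # whole kilograms, 2 to 50 inclusive
    accel = round(rng.uniform(0.5, 9.5), 1)  # one decimal place
    return Variant(student_id, mass, accel)


if __name__ == "__main__":
    # Hypothetical student and assessment IDs
    for sid in ["s001", "s002", "s003"]:
        v = make_variant(sid, "PHYS101-Q3")
        print(v.question, "->", v.answer)
```

Deriving the seed from a hash, rather than storing a random draw, means no table of assignments has to be kept: the variant and its expected answer can be regenerated at marking time from the student ID alone.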

- 2. Designing across a programme

Principle: Adopt a scaffolded assessment strategy across a whole programme

- Design formative (non-credit-bearing) assessment that has clear links to summative assessment. Where possible, foster connections with real-world contexts to promote values of honesty, fairness, trust and ethical thinking. Encourage students to take risks and develop self-reflection in formative tasks.

- An unbalanced assessment load can add pressure on students, increasing the likelihood that they resort to academic misconduct to complete all assessments in time. Think about how you can time assessments to avoid bunching. Consider how much assessment is required across an entire programme: this might be less than the total of all module assessments.

- Students are more inclined to engage well with assessments where they can see future value, so think about how assessments link across a programme, and signpost these opportunities for development of personal skills and knowledge to students.

Resources

- Use the UCL Chart tool to map your assessments across a programme.

- UCL Arena offers sessions on Designing assessment across a programme, bookable through the events feed

- Programme Design workshops facilitate discussions across entire programme teams, and these can be focussed on assessment https://www.ucl.ac.uk/teaching-learning/teaching-resources/designing-pro...

- ABC workshops enable teams to review and design modules.

Principle: Design a scaffolded and integrated feedback strategy across modules or a programme

- Communicating and giving formative feedback at the right time throughout the assessment process supports and scaffolds assessment for learning, offering students opportunities along the way for self, peer or tutor feedback, e.g. WIP (Work in Progress) or Crits.

- Look at throughlines of similar assessments across a programme of study, and highlight these to your students, showing how each feeds into the next.

- Facilitate students’ use of feedback in shaping their future assignments through discussions in class or using digital coversheets for assessment submission that remind them to engage with past feedback.

- Provide actionable and concise feedback to students.

Examples

- UCL Population Health have developed faculty-wide feedback guidance that gives checklists for module and programme leads to consider.

- [Case study] Jane Simmonds has written about how she encourages students to reflect on their feedback in class.

Resources

- Video on giving efficient feedback

- Guidance on using proformas for feedback, and links to some examples

- 7 short tips for enhancing feedback to students.

Principle: Signpost good practice to students at every opportunity

- Set expectations with students from the beginning: don’t assume that they share your and UCL’s understanding of what misconduct is. Start discussing the use of AI writing tools with your students as part of wider conversations about academic integrity and about how their engagement with assessment throughout the programme supports their own learning and development. The basic tenets of academic integrity apply to AI.

- Praise good behaviours, and explain the penalties associated with poor academic integrity. Encourage students to ask questions if they are unsure of practices.

- Model good academic practice in your teaching, for instance by ensuring that material is always correctly referenced.

Resources

- Student guidance on academic integrity at UCL.

Further help

This resource aligns with the ‘Designing Open book exams’ Teaching Toolkit.

- Glossary

Academic integrity: includes all the principal behaviours and approaches relating to fairness and honesty within teaching, learning and assessment. Assessment practices that are transparent and promote honest and responsible student engagement also support academic standards and contribute to the quality of the student experience.

Academic misconduct: is defined as any action or attempted action that provides an unfair academic advantage. This includes plagiarism, collusion, falsification and contract cheating, as well as unauthorised communication, collaboration, file sharing, or use of books or technology.

Artificial Intelligence: A new generation of writing tools, such as OpenAI’s ChatGPT and GPT-3 and DeepMind’s AlphaCode, have recently entered the education space. These cannot currently be detected by tools such as Turnitin. Whilst these tools have a wow factor, they have weaknesses that can be exploited in assessment.

Assessment types that exploit these weaknesses include:

- Reports on independent research activities

- Progressive/reflective portfolio-style assignments that are built up over time

- Application of theories to case studies

- Interactive oral assessments

- Assessments that progressively build on each other, and

- Synoptic assessment.

Assessment criteria: consist of the knowledge, understanding, competencies and skills that markers expect a student to display in an assessment task, and which are taken into account in marking the work. These criteria are guided by the learning outcomes of the module and programme.

Formative assessment: comprises an ungraded assessment task, though it can also be a component of a larger assessment. It is essential in helping students successfully embed learning as they work towards summative assessment and results in clear ‘actionable’ tutor feedback (whilst not excluding peer or other kinds of feedback). It can be timed throughout the module or programme to provide motivation and ensure students are clear about how to make progress.

Gateway assessment: provides a substantial and strong link between formative and summative assessment. The formative aspect may be an essential required component of the summative assessment but is ungraded.

Feedback: is information given to a learner from a single or multiple sources to reduce the gap between current performance and the desired goal. The primary purpose of feedback is to help learners adjust their thinking and behaviours to produce improved learning outcomes.

Summative assessment: is used to indicate the extent of a learner's success in meeting the assessment criteria used to gauge the intended learning outcomes of a module or programme. It may take the form of examinations, vivas, written assignments, quizzes, reports, recitals, tests, or other evaluations. It contributes to the final outcome of a student’s degree.

Authentic assessment: An assessment that is reflective of reality or the world in which the student will have to apply their learning. It is fit for purpose in that it measures what it purports to measure i.e., the learning outcome(s).

Co-design: Refers to any aspect of the assessment that involves students contributing to the design process. For example, students might be asked to engage in the actual design, draft sample exam questions or briefs or help with developing the assessment rubric.

E-portfolios: An electronic portfolio allows students to document and show their progress and process in an assessment. In addition, when used as a formative tool, it helps scaffold student development through peer and tutor feedback as the portfolio develops.

Formative feedback: Tutor or peer feedback designed to inform and advance the student’s summative (final) piece of work. It is considered best practice to afford students an opportunity to improve their work before submitting it for the final summative grade.

Programme assessment strategy: A well thought out, structured and integrated assessment plan that links and relates assessment tasks across a programme and stage (year).

Rubric: A detailed breakdown of how marks are assigned to criteria in an assessment.

References

Egan, A. (2018). Improving Academic Integrity through Assessment Design. Dublin City University, National Institute for Digital Learning (NIDL).

Harper, R., Bretag, T., & Rundle, K. (2021). Detecting contract cheating: examining the role of assessment type. Higher Education Research & Development, 40(2), 263-278. DOI: 10.1080/07294360.2020.1724899

Rundle, K., Curtis, G., & Clare, J. (2020). Why students choose not to cheat. In T. Bretag (Ed.), A Research Agenda for Academic Integrity. Edward Elgar Publishing.

Webb, M. (2022). AI and assessment webinar report and reflections. Jisc. Online.

Further reading

Dawson, P. (2020). Defending Assessment Security in a Digital World: Preventing E-Cheating and Supporting Academic Integrity in Higher Education.

Dawson, P., & Sutherland-Smith, W. (2019). Can training improve marker accuracy at detecting contract cheating? A multi-disciplinary pre-post study. Assessment & Evaluation in Higher Education, 44(5), 715-725.


QAA (2022). Assessment in Digital and Blended Pedagogy.

This guide has been produced by UCL Arena Centre for Research-based Education, with thanks to Phil Dawson. You are welcome to use this guide if you are from another educational facility, but you must credit the project.
