Nature inspired methods for optimization

A Julia primer for process engineering

| Author | Eric S Fraga (email) |

| DOI | 10.5281/zenodo.7016482 |

| Latest news | First edition published |

| Git revision | 2022-11-09 1a40907 |

An ebook (PDF) version of this book is available from leanpub

©

2022

Eric S Fraga, all rights reserved.

Sargent Centre for Process Systems Engineering

Department of Chemical Engineering

University College London (UCL)

Dedicated to Jennifer and Sebastian, with love.

- DOI

- 10.5281/zenodo.7016482

- First edition

- 2022-08-19

- Current version

- 2022-11-09 1a40907

Nature inspired methods for optimization have been available for many years. They are attractive because of their inspiration and because they are comparatively easy to implement. This book aims to illustrate this, using a relatively new language, Julia. Julia is a language designed for efficiency. Quoting from the developers of this language:

Julia combines expertise from the diverse fields of computer science and computational science to create a new approach to numerical computing. [Bezanson et al. 2017]

The case studies, from industrial engineering with a focus on process engineering, have been fully implemented within the book, bar one example which uses external codes. All the code is available for readers to try and adapt for their particular applications.

This book does not present state of the art research outcomes. It is primarily intended to demonstrate that simple optimization methods are able to solve complex problems in engineering. As such, the intended audience will include students at the Masters or Doctoral level in a wide range of research areas, not just engineering, and researchers or industrial practitioners wishing to learn more about Julia and nature inspired methods.

Table of Contents

1. Introduction

1.1. Optimization

The problems considered in this book will all be formulated as the minimization of the desired objectives. There may be one or multiple objectives. In either case, the general formulation is

\begin{align} \label{org5094869} \min_{d \in \mathcal{D}} z &= f(d) \\ g(d) & \le 0 \nonumber \\ h(d) & = 0 \nonumber \end{align}where \(d\) are the decision (or design) variables and \(\mathcal{D}\) is the search domain for these variables. \(\mathcal{D}\) might be, for instance, defined by a hyper-box in \(n\) dimensional space, \[ \mathcal{D} \equiv \left [ a, b \right ]^n \subset \mathbb{R}^n \] when only continuous real-valued variables are considered. More generally, the problem may include integer decision variables. Further, the constraints, \(g(d)\) and \(h(d)\), will constrain the feasible points within that domain.

1.2. Process systems engineering

The focus of this book is on solving problems that arise in process systems engineering (PSE). Optimization plays a crucial part in many PSE activities, including process design and operation. The problems that arise may have one or more of these challenges, in no particular order:

- nonlinear models

- multi-modal objective function

- nonsmooth and discontinuous

- distributed quantities

- differential equations

- small feasible regions

- multiple objectives

Each of these aspects, individually, can prove challenging for many optimization methods, especially those based on gradients to direct the search for good solutions. Some problems may have several of these characteristics.

Three chapters will present example design problems which exhibit some of the above aspects:

- purification section for a chlorobenzene production process: discontinuous, non-smooth, small feasible region;

- design of a plug flow reactor: continuous distributed design variable with behaviour described by differential equations;

- heat exchanger network design: combinatorial, non-smooth, complex problem formulation.

These problems may be challenging for gradient based methods and therefore motivate the use of meta-heuristic methods. In this book, I will present meta-heuristic methods inspired by nature.

1.3. Nature inspired optimization

There are many optimization methods and different ways of classifying these methods. One classification used often is deterministic versus stochastic. The former include direct search methods [Kelley 1999] and what are often described as mathematical programming, such as the Simplex method [Dantzig 1982] for linear problems and many different methods for nonlinear problems [Floudas 1995]. The main advantage of deterministic methods is that they will obtain consistent results for the same starting conditions and may provide guarantees on the quality of the solution obtained and/or the performance of the method, such as speed of convergence to an optimal solution. If you are interested in using Julia for mathematical programming, the JuMP package is popular.

Stochastic methods, as the name implies, are based on random behaviour. The main implication is that the results obtained will vary from one attempt to another, subject to the random number generator used. However, the advantage of stochastic methods can be the ability to avoid getting stuck in local optima and potentially find the global optimum or optima for the problem.

Some popular stochastic methods include simulated annealing [Kirkpatrick et al. 1983] and genetic algorithms [Holland 1975] but many others exist [Lara-Montaño et al. 2022]. Simulated annealing, as the name implies, is based on the process of annealing, typically in the context of the controlled cooling of liquid metals to achieve specific properties (hardness, for instance). Genetic algorithms mimic Darwinian evolution, for instance, by using survival of the fittest to propagate good solutions and evolve better solutions. One common aspect of many stochastic methods is that they are inspired by nature.

In this book, we will consider three such nature inspired optimization methods: genetic algorithms, particle swarm optimization [Kennedy and Eberhart 1995], and a plant propagation algorithm [Salhi and Fraga 2011]. These are chosen somewhat arbitrarily as examples of stochastic methods. The selection is in no way intended to indicate that these are the best methods for any particular problem. The aim is to illustrate the potential of these methods, how they may be implemented, and how they may be used to solve problems that arise in process systems engineering.

1.4. Literate programming

This book includes all the code implementing the nature inspired optimization methods and most of the case studies, sufficient to allow readers to attempt the problems themselves.

Programming is at heart a practical art in which real things are built, and a real implementation thus has to exist. [Kay 1993]

The book has been written using literate programming [Knuth 1984]. The motivation for literate programming, to quote Donald Knuth, comes from:

[…] the time is ripe for significantly better documentation of programs, and that we can best achieve this by considering programs to be works of literature. [Knuth 1984]

Therefore, this book may be considered to be the documentation of the code used to implement the methods and to evaluate the problems defined in the case studies. The code presented in the book is automatically exported to the source code files, suitable for immediate invocation.

The technology supporting literate programming in this case is org mode (version 9.5.4). Org mode is a special mode in the Emacs editor (version 29.0.50.0). Org mode supports code blocks which may include programming code in a very wide range of languages. These code blocks are tangled into code files [Schulte and Davison 2011]. In the case of this book, all the code can be found in the author's github repository.

As well as enabling tangling to create the source code files, org mode supports exporting documents to a variety of different targets, including PDF, epub, and HTML. The HTML version of the book is freely available.

Lastly, the literate programming support in org mode not only enables tangling, it also allows for code to be run directly within the Emacs editor while editing the document, with the results of the evaluation inserted into the document [Schulte and Davison 2011]. This book makes full use of this capability to process the results of the optimization problems, including extracting and generating statistical data with awk, grep, and similar tools, and plotting the outcomes with gnuplot.

1.5. The Julia language

Julia is a multi-purpose programming language with features ideally suited for writing generic optimization methods and numerical algorithms in general. To repeat the quote from the initial authors of the language,

Julia combines expertise from the diverse fields of computer science and computational science to create a new approach to numerical computing.[Bezanson et al. 2017]

For the purposes of this book, the key features of Julia are the following:

dynamic typing for high level coding;

multiple dispatch to create generic code that may be easily extended;

functional programming elements for operating on data easily;

integrated package management system to enable re-use and distribution; and,

the REPL (read-evaluate-print loop) for exploring the language and testing code.

In this section, some of these aspects will be illustrated through code elements that will be used by all the nature inspired optimization methods presented in this book.

Julia version 1.7 has been used for all the codes in this book. I have tried to ensure that the style guide for writing Julia code has been followed throughout.

1.5.1. The package and modules

The codes presented in this book are tangled into a Julia package called NatureInspiredOptimization and available from the author's github repository. This package can be added to your own Julia installation by entering the package manager system in Julia using the ] key and then using the add command with the URL of the git repository for the package. This will add not only the package specified but also any dependencies, i.e. other packages, specified by this package. Hitting the backspace key will exit the package manager. Once added, the package and any sub-packages it may define, can be accessed through the using Julia statement. This will be illustrated in the examples in this book.

Some external packages have been used in writing the code presented in the book. These include DifferentialEquations, Plots, JavaCall, and Printf. These will automatically be installed when installing the NatureInspiredOptimization package. The one exception is the Jacaranda package (see Section 3.2 below), written by the author and not currently registered in the Julia Registry. Therefore, this package needs to be added explicitly.

In summary, installing the NatureInspiredOptimization package can be achieved as follows:

$ julia julia> ] pkg> add https://gitlab.com/ericsfraga/Jacaranda.jl pkg> add https://github.com/ericsfraga/NatureInspiredOptimization.jl [...] (@v1.7) pkg> BACKSPACE julia> ^d $

Note that the Jacaranda package is found in a gitlab repository while the NatureInspiredOptimization package is on github.

1.5.2. Objective function

All optimization problems in this book will define an objective function. The methods implemented will all be based on the same signature for the objective function:

(z, g) = f(x; π)

where \(f\) is the name of the function implementing the objective function for the optimization problem, to be evaluated at the point \(x\) in the search space. The second optional argument, \(\pi\), will consist of a data structure of any type which includes parameters that are problem dependent. The function \(f\) is expected to return a tuple. The first entry in the tuple, \(z\), is the value of the objective function for single objective problems and a vector of values for multi-objective problems. The second element of the tuple, \(g\), is a single real number which indicates the feasibility of the point \(x\): \(g\le 0\) means the point is feasible; \(g>0\) indicates an infeasible point, with the magnitude of \(g\) ideally representing the amount of constraint violation, when this is possible. In determining the fitness of a point, see Section 2.2.3 below, both the value(s) of \(z\) and \(g\) will be used.

To allow for both single and multi-objective problems in the code that follows, the generic comparison operators \(\succ\) (\succ in Julia) and ≽ (\succeq in Julia) will be used. \(a \succ b\) means that \(a\) is better than \(b\) and \(a \succeq b\) means that \(a\) is at least as good as \(b\). For single objective minimization problems, which will be the case for the case studies presented later in the book, these operators correspond to the less than (<) and less than or equals (≤) comparisons:

1:≻(a :: Number, b :: Number) = a < b2:≽(a :: Number, b :: Number) = a ≤ b3:export ≻, ≽

This code segment illustrates several Julia features:

- the single line definition of a function using assignment;

- the definition of a binary operator so that ≻ or ≽ can be used as in

a≻bto compareaandb; - the use of generic types so that the same operator can be used for real valued numbers, integers, or combinations of these; and,

the use of

exportto make the operator available without needing to qualify it with the package name.

For multi-objective problems, the value \(z\) returned by the objective function will be a vector of values. When comparing multi-objective solutions, the comparison is based on dominance: a solution dominates another solution if the first one is at least as good in each individual value and strictly better for at least one value:

1: dominates(a, b) = all(a .≽ b) && any(a .≻ b)

This illustrates the use of broadcast, the dot operator, which asks Julia to apply the operator (or function) that follows the dot to each individual element in turn. So this code says that \(a\) dominates \(b\) if all the values of \(a\) are at least as good as the corresponding values of \(b\) and if any value of \(a\) is better than the corresponding value of \(b\).

Given the definition of dominance, we can now define an operator for comparison:

1: ≻(a :: Vector{T}, b :: Vector{T}) where T <: Number = dominates(a,b)

The important feature of this code is that we have defined the function to works for vectors so long as

- they are of the same type,

T, and - the type

Trepresents a number entity, such asFloat64orInt32.

Julia has a hierarchy of types defined.

The multiple dispatch feature of Julia will ensure that the proper comparison function is invoked when comparing objective function values for different points in the search.

1.5.3. Multiprocessing

An additional capability of Julia is multiprocessing, using multiple computers or computer cores simultaneously. Given the increasing prevalence of multi-core systems, including both desktop computers and laptops, it is reasonable to consider writing all code to make use of the extra potential computational power available.

In Julia, the Threads package which is part of the base system provides an easy to use interface to enable the use of multiple cores. The simplest construct, which I will be using in this book, is:

1:Threads.@threads for x ∈ acollection2:# do something(x)3:end

This executes the body of the for loop in parallel using as many threads as made available to Julia. Invoking Julia with the -t option tells Julia how many cores to use simultaneously:

julia -t N

where N is some number, usually less than or equal to the number of cores available on the computer, or by

julia -t auto

In this case, Julia itself will determine the number of cores to use automatically.

The advantage of the nature inspired optimization methods I will be presenting later in this book is that they are all based on populations of solutions. Therefore, the computation associated with the members of the population are easy to distribute amounts the computational cores available. The plant propagation algorithm implementation (see Section 2.5) uses threads to evaluate members of the new population in parallel.

1.6. Representation

Different mathematical problem formulations of the same problem may have an impact on the solution process. Examples exist for the Simplex method [Hall and McKinnon 2004]. The choice of formulation may be a consideration for some problems. Further, for a given formulation, there may be alternative representations of the decision variables [Fraga et al. 2018] and these may affect the performance of individual optimization methods. Tailoring the formulation or representation to the method used may prove beneficial [Salhi and Vazquez-Rodriguez 2014].

For a given formulation, the representation should be chosen taking into account the following considerations:

- The representation should be suitable for the optimization method or methods, enabling the implementation of the appropriate operations that are required by the methods. For instance, if the method is a genetic algorithm, the representation should be suitable for cross-over and mutation operators.

- The representation should cover the complete space of feasible solutions to the problem or, at worst, ensure that the desirable potential solutions can be represented.

- On the other hand, the representation should minimise the probability of the method's operators generating infeasible or undesirable solutions.

Multiple dispatch in Julia aids the process of considering different representations. For instance, different versions of the objective function implementation can be written that differ in the type of the first argument. This enables alternative representations without needing to change the implementation of the solution method. An example of this appeared recently [Fraga 2021c]. This latter example includes code in Julia using the Fresa implementation of a plant propagation algorithm [Fraga 2021b; Fraga 2021a] (see Section 2.5).

2. Nature inspired optimization methods

2.1. Introduction

This book considers a selection of nature inspired optimization methods, specifically a selection of methods which are based on the evolution of a population of solutions. Three different solvers are described and fully implemented. These three have been selected as being representative of the class of evolutionary population based methods but the selection is not meant to be comprehensive, merely illustrative. The implementations presented in the book have been motivated by presentation and pedagogical advantages as opposed to aiming for the most efficient or feature-full implementations. References to production codes will be made where available. However, the codes presented are fully working implementations, as will be demonstrated through application to each of the case studies included in this book.

The three methods presented are

- genetic algorithm, inspired by Darwinian evolution and survival of the fittest;

- particle swarm optimization, inspired by flocks of birds and swarms of bees, looking for food or resting places; and,

- plant propagation algorithm, inspired by the propagation method used by Strawberry plants.

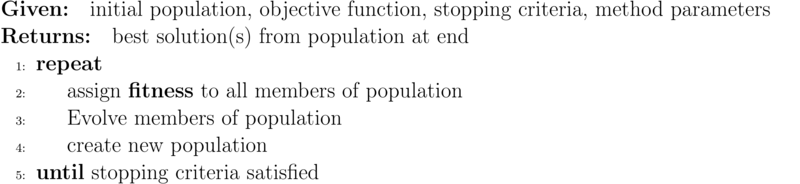

All three methods fundamentally follow the same basic approach, illustrated in Algorithm 1.

2.2. Shared functionality

All the methods presented in this chapter share some common functionality. This functionality is implemented in this section as a set of generic functions. These include fitness calculations for all the methods, including both single objective and multi-objective problems, selection procedures for those methods that use selection, and population management including function evaluation.

The codes in this section assume implicitly that the target optimization problem is one of minimization. If the problem considered were to be one of maximization, transformations of the objective function would be required. Many such transformations are possible including simply negating the value of the objective function.

2.2.1. Generic support functions

Some utility functions are defined in this section to aid in subsequent programming.

mostfitfind the most fit individual in the population and return that individual. As there may be more than one individual in the population with the same maximum fitness value, only the first one is returned. This is arbitrary but given the stochastic nature of the nature inspired optimization methods, it is not unreasonable.

1:

mostfit(p,ϕ) = p[ϕ .≥ maximum(ϕ)][1]φ is the vector of fitness values, a value for each member of the population

p.nondominatedreturn, from a population, those points which are not dominated.

1:

function nondominated(pop)2:nondom = Point[]3:for p1 ∈ pop4:dominated = false5:for p2 ∈ pop6:if p2 ≻ p17:dominated = true8:break9:end10:end11:if ! dominated12:push!(nondom, p1)13:end14:end15:nondom16:endThe key line in this code is line 6. This uses the previously defined better than operator to identify solutions that are dominated by others in the population. Note that this function is primarily intended for use for multi-objective optimization problems, the use of the ≻ operator, and multiple dispatch in Julia, results in code that works for single objective optimization problems as well.

printpointsprint out, row by row, the set of points in a population. As we are using

org modefor writing this book, it is helpful to have the output generated in a form that is convenient for post-processing in that mode.1:

function printpoints(name, points)2:println("#+name: $name")3:for p ∈ points4:printvector("| ", p.x, "| ")5:printvector("| ", p.z, "| ")6:printvector("| ", p.g, "| ")7:println()8:end9:endprintvectorprint, in compact form, the vector of real values, to be used mostly for demonstrator and test codes.

1:

function printvector(start, x, separator = "")2:@printf "%s" start3:for i ∈ 1:length(x)4:@printf "%.3f %s" x[i] separator5:end6:end- randompoint

It is often necessary to generate random points within the search domain. For instance, most evolutionary methods require an initial population. The arguments to this method are the lower and upper bounds of the hyper-box defining the search domain.

1:

randompoint(a, b) = a + (b - a) .* rand(length(a))statusoutputduring the evolution of a population, we may wish to monitor the performance. This function outputs periodically the number of function evaluations and the current most fit solution (see above). The frequency of output decreases with the increase in magnitude of the number of function evaluations performed.

1:

function statusoutput(2:output, # true/false3:nf, # number of function evaluations4:best, # population of Points5:lastmag, # magnitude of last output6:lastnf # nf at last output7:)8:if output9:z = best.z10:if length(z) == 111:z = z[1]12:end13:δ = nf - lastnf14:mag = floor(log10(nf))15:if mag > lastmag16:println("evolution: $nf $z $(best.g)")17:lastmag = mag18:lastnf = nf19:elseif δ > 10^(mag)-120:println("evolution: $nf $z $(best.g)")21:lastnf = nf22:end23:end24:(lastmag, lastnf)25:end

2.2.2. A point in the search domain

We define a Point type to provide a generic interface for the handling of members of a population. The structure includes the actual representation of the solution (i.e. the point in the search domain), the objective function values for the point, and the measure of feasibility. It is assumed in all the implementations of the solvers that the existence of a Point means that the objective function has been evaluated for that point in the search space, reducing the amount of unnecessary computation.

1:struct Point2:x :: Any # decision point3:z :: Vector # objective function values4:g :: Real # constraint violation5:function Point(x, f, parameters = nothing)6:# evaluate the objective function,7:# using parameters if given8:if parameters isa Nothing9:z, g = f(x)10:else11:z, g = f(x, parameters)12:end13:# check types of returned values14:# and convert if necessary15:if g isa Int16:g = float(g)17:end18:if rank(z) == 119:# vector of objective function values20:new(x, z, g)21:elseif rank(z) == 022:# if scalar, create size 1 vector23:new(x, [z], g)24:else25:error( "NatureInspiredOptimization" *26:" methods can only handle scalar" *27:" and vector criteria," *28:" not $(typeof(z))." )29:end30:end31:end

The type is a structure with the three values and a function known as the constructor. The constructor function, in this case, takes the point x, the objective function f, and the optional parameters and evaluates the objective function at that point. The resulting objective function values and the measure of feasibility are saved along with the point.

The constructor uses the rank function, defined here:

1: rank(x :: Any) = length(size(x))

This function is used to check for scalar versus vector values returned by the objective function. We ensure that the objective function values stored are always a vector. This will make handling the results easier and more generic.

In the evolutionary methods implemented below, I will wish to compare different points in the search domain. This function builds on the comparison operators defined above to allow us to compare Point objects:

1: ≻(a :: Point, b :: Point) = (a.g ≤ 0) ? (b.g > 0.0 || a.z ≻ b.z) : (a.g < b.g)

which says that point a is better than point b if a is feasible but b is not, both points are feasible and the objective function values for a are better than for b, or both are infeasible but a has a lower measure of infeasibility.

This function uses the ternary operator ? which is an in-line if-then-else statement.

2.2.3. Fitness calculations

For single objective functions, the fitness of solutions will be a normalised value, \(\phi \in [0,1]\), with a fitness of 1 being the most fit and 0 the least fit. In practice, we will limit the fitness to be \(\phi \in (0,1)\) to avoid edge cases with the boundaries for, at least the PPA, which uses the fitness value directly in identifying new solutions.

The fitness calculations depend on the number of objectives. For single objective problems, the fitness is the objective function values normalised and reversed. The best solution will have a fitness value close to 1 and the worst solution a fitness value close to 0. For multi-criteria problems, a Hadamard product of individual criteria rankings is used to create a fitness value [Fraga and Amusat 2016] with the same properties: more fit, i.e. better, solutions have fitness values closer to 1 than less fit, or worse, solutions.

The fitness calculations also take the feasibility of the points into account. If both feasible and infeasible solutions are present in a population, the upper half of the fitness range, \([0.5,1)\), is used for the feasible solutions and the bottom half, \((0,0.5]\), for the infeasible solutions. If only feasible or infeasible solutions are present, the full range of values is used for whichever case it may be. This simultaneous consideration of feasible and infeasible solutions in a population is motivated by the sorting algorithm used in NSGA-II [Deb 2000].

This function uses a helper function, defined below, to assign a fitness to a vector of objective function values.

1:function fitness(pop :: Vector{Point})2:l = length(pop)3:# feasible solutions are those with g ≤ 04:indexfeasible = (1:l)[map(p->p.g,pop) .<= 0]5:# infeasible have g > 06:indexinfeasible = (1:l)[map(p->p.g,pop) .> 0]7:fit = zeros(l)8:# the factor will be used to squeeze the9:# fitness values returned by vectorfitness into10:# the top half of the interval (0,1) for11:# feasible solutions and the bottom half for12:# infeasible, if both types of solutions are13:# present. Otherwise, the full interval is14:# used.15:factor = 116:if length(indexfeasible) > 017:# consider only feasible subset of pop18:feasible = view(pop,indexfeasible)19:# use objective function value(s) for ranking20:feasiblefit = vectorfitness(map(p->p.z,feasible))21:if length(indexinfeasible) > 022:# upper half of fitness interval23:feasiblefit = feasiblefit./2 .+ 0.524:# have both feasible & infeasible25:factor = 226:end27:fit[indexfeasible] = (feasiblefit.+factor.-1)./factor28:end29:if length(indexinfeasible) > 030:# squeeze infeasible fitness values into31:# (0,0.5) or (0,1) depending on factor,32:# i.e. whether there are any feasible33:# solutions as well or not34:infeasible = view(pop,indexinfeasible)35:# use constraint violation for ranking as36:# objective function values although it37:# should be noted that the measure of38:# infeasibility may not actually make any39:# sense given that points are infeasible40:fit[indexinfeasible] = vectorfitness(map(p->p.g, infeasible)) / factor;41:end42:fit43:end

This code illustrates the use of some functional programming aspects of Julia. In lines 4 and 6, the map function is used to look at the value of g for each member of the population. The comparison within [] generates a vector of true or false values and this vector is used to select the indices from the full set, \(\{1, \ldots, l\}\), where \(l\) is the length of the vector of points.1 On line 18, this vector of indices for the feasible points (if any) is used to create a view into the original population vector consisting purely of the feasible points. Then, on line 20, the map function is used again to look at the objective function values and a fitness value is assigned using the helper function vectorfitness, below. Finally, on line 27, the indices for the feasible points are used to record the fitness values in the vector that will be returned by the fitness function.

The following helper function works with a single vector of objective function values, each of which may consist of single or multiple objectives. For single objective problems, the fitness is simply the normalised objective function value. For multi-objective cases, the fitness is based on the Hadamard product of the rank of each member of population according to each criterion [Fraga and Amusat 2016]. This function uses yet another helper function, adjustfitness, which restricts the fitness values to \((0,1)\).

1:function vectorfitness(v)2:# determine number of objectives (or3:# pseudo-objectives) to consider in ranking4:l = length(v)5:if l == 16:# no point in doing much as there is only7:# one solution8:[0.5]9:else10:m = length(v[1]) # number of objectives11:if m == 1 # single objective12:fitness = [v[i][1] for i=1:l]13:else # multi-objective14:# rank of each solution for each15:# objective function16:rank = ones(m,l);17:for i=1:m18:rank[i,sortperm([v[j][i] for j=1:l])] = 1:l19:end20:# hadamard product of ranks21:fitness = map(x->prod(x), rank[:,i] for i=1:l)22:end23:# normalise (1=best, 0=worst) while24:# avoiding extreme 0,1 values using the25:# hyperbolic tangent26:adjustfitness(fitness)27:end28:end

Line 18 ranks each solution according to each individual objective function: the values for the solutions for a given objective function are sorted using sortperm and the rank assigned as a value between 1 and the number of solutions. Then, on line 21, the different rankings are combined into a single fitness value for each solution using the Hadamard product.

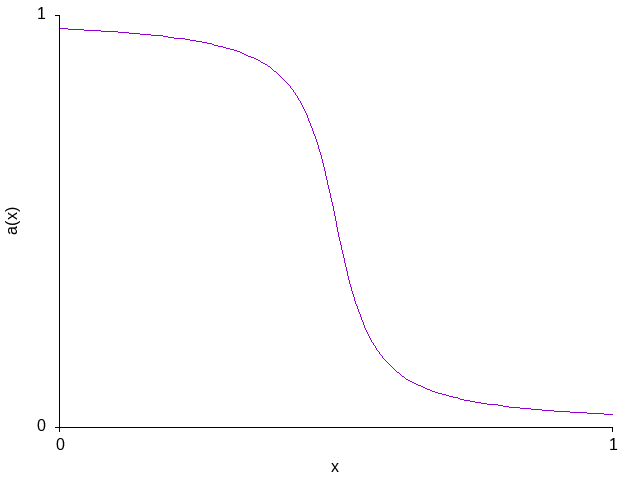

The fitness should be a value ∈ (0,1), i.e. not including the bounds themselves as those values may cause edge effects when the fitness value is used directly to define new points in the search domain. Therefore, we adjust the fitness values to ensure that the bounds are not included. The side effect of this adjustment, using the tanh function [Salhi and Fraga 2011], is that the fitness is given a sigmoidal shape, steepening the curve around the middle range of fitness values. This potentially affects those methods that use the actual fitness value for the generation of new points in the search space [Vrielink and van den Berg 2021b; Vrielink and van den Berg 2021a] but does not affect the relative ranking of points in the population.

1:function adjustfitness(fitness)2:if (maximum(fitness)-minimum(fitness)) > eps()3:0.5*(tanh.(4*(maximum(fitness) .- fitness) /4:(maximum(fitness)-minimum(fitness)) .- 2)5:.+ 1)6:else7:# if there is only one solution in the8:# population or if all the solutions are9:# the same, the fitness value is halfway10:# in the range allowed11:0.5 * ones(length(fitness))12:end13:end

2.2.3.1. Test the fitness method

Later, I will present a case study involving the design a process for purification of chlorobenzene (Chapter 3). This case study has two criteria for assessing the design: the capital cost and the operating cost. Therefore, the fitness has to consider both objectives. Consider a population consisting of process designs at some particular point, x0, at the lower bounds on all variables, at the upper bounds, and at a point midway between the bounds. This code snippet evaluates the fitness at these four points:

1:using NatureInspiredOptimization: Point, fitness2:using NatureInspiredOptimization.Chlorobenzene: process, evaluate3:flowsheet, x0, lower, upper, nf = process()4:population = [5:Point(x0, evaluate, flowsheet)6:Point(lower, evaluate, flowsheet)7:Point(upper, evaluate, flowsheet)8:Point(lower + (upper-lower)/2.0, evaluate, flowsheet)9:]10:fitness(population)

The results of the bi-criteria fitness calculation using the Hadamard product are:

| 0.807216925065357 |

| 0.9820137900379085 |

| 0.01798620996209155 |

| 0.4822791408831034 |

The fitness values have been calculated based solely on the measure of infeasibility because all the solutions are infeasible. If the solutions considered were to include a feasible point, the results will differ. From a previous study of the chlorobenzene process, a known feasible solution can be evaluated:

1:using NatureInspiredOptimization.Chlorobenzene: process, evaluate2:flowsheet, x0, lower, upper, nf = process()3:4:evaluate([1.3303962289290255:0.86574560560833786:17:1.2173537128058718:0.99524655243847779:1.2523035637552410:1.44859348748093611:0.96955390109404212:2.080117425247644], flowsheet)

and which has objective function values

([2.15517966714251e6, 1.7474586736116146e6], 0.0)

with a 0.0 value for the constraint violation, indicating that this solution is indeed feasible. If we now evaluate a population that includes this solution as well,

1:using NatureInspiredOptimization: Point, fitness2:using NatureInspiredOptimization.Chlorobenzene: process, evaluate3:flowsheet, x0, lower, upper, nf = process()4:population = [5:Point(x0, evaluate, flowsheet)6:Point(lower, evaluate, flowsheet)7:Point(upper, evaluate, flowsheet)8:Point(lower + (upper-lower)/2.0, evaluate, flowsheet)9:Point([1.33039622892902510:0.865745605608337811:112:1.21735371280587113:0.995246552438477714:1.2523035637552415:1.44859348748093616:0.96955390109404217:2.080117425247644], evaluate, flowsheet)18:]19:fitness(population)

the fitness values are:

| 0.4036084625326785 |

| 0.4910068950189542 |

| 0.008993104981045774 |

| 0.2411395704415517 |

| 0.875 |

All the infeasible solutions have been assigned fitness values in the lower half of the range, \((0,0.5)\), and the single feasible solution has been given a fitness value in the top half of the range. Although the fitness values of the infeasible solutions differ from the previous example, their relative ranking remains the same within the set of infeasible members.

2.2.4. Selecting points based on fitness

Nature inspired optimization methods often require selecting one or more members of a population, such as for creating new solutions for the next generation based on current solutions. This selection will typically be based on the fitness: the more fit a solution is, the more likely it is to be selected. There are many methods available for selection including, for instance, the best in the population, a roulette wheel analogue, and tournament selection [Goh et al. 2003]. In this book, we will be using the tournament selection, with a tournament size of 2 as we have found this to be a good choice for problems in process systems engineering.

The function implemented below returns the index into the population for the selected point. The argument is the vector of fitness values, φ.

1:function select(ϕ)2:n = length(ϕ)3:@assert n > 0 "Population is empty"4:if n ≤ 15:16:else7:i = rand(1:n)8:j = rand(1:n)9:ϕ[i] > ϕ[j] ? i : j10:end11:end

Note the use of @assert to catch a situation that should not happen: attempting to select from an empty population. @assert expects a condition and an optional error message to output if the condition is not satisfied.

2.2.5. Quantifying the quality of multi-objective solutions

The fitness calculations, above, section 2.2.3, are suitable for multi-objective optimization problems because they incorporate all the objectives in the calculation of the fitness. However, we need to be able to quantify a measure of the quality of the population obtained by each method if we wish to compare the performance of different methods. For single objective problems, the objective function value of the best solution in the final population gives us that measure. For multicriteria problems, no such single value is present in the population of points returned by any method.

The equivalent of best for a multicriteria problem is the set of non-dominated points (see Section 2.2.1 for the definition and implementation). The best outcome obtained is the approximation to the Pareto frontier. This approximation is the set of all the points in the final population that are not dominated. Ideally, a measure of the quality of the set of non-dominated points returned by a method will be based on how close the set is to this frontier and how well the frontier is represented in terms of breadth [Riquelme et al. 2015]. However, as the actual frontier is not known, any quantification will need to be relative, which may allow us to say whether one method is better than another for an individual case study or what combination of parameter values for a given method leads to the best outcomes.

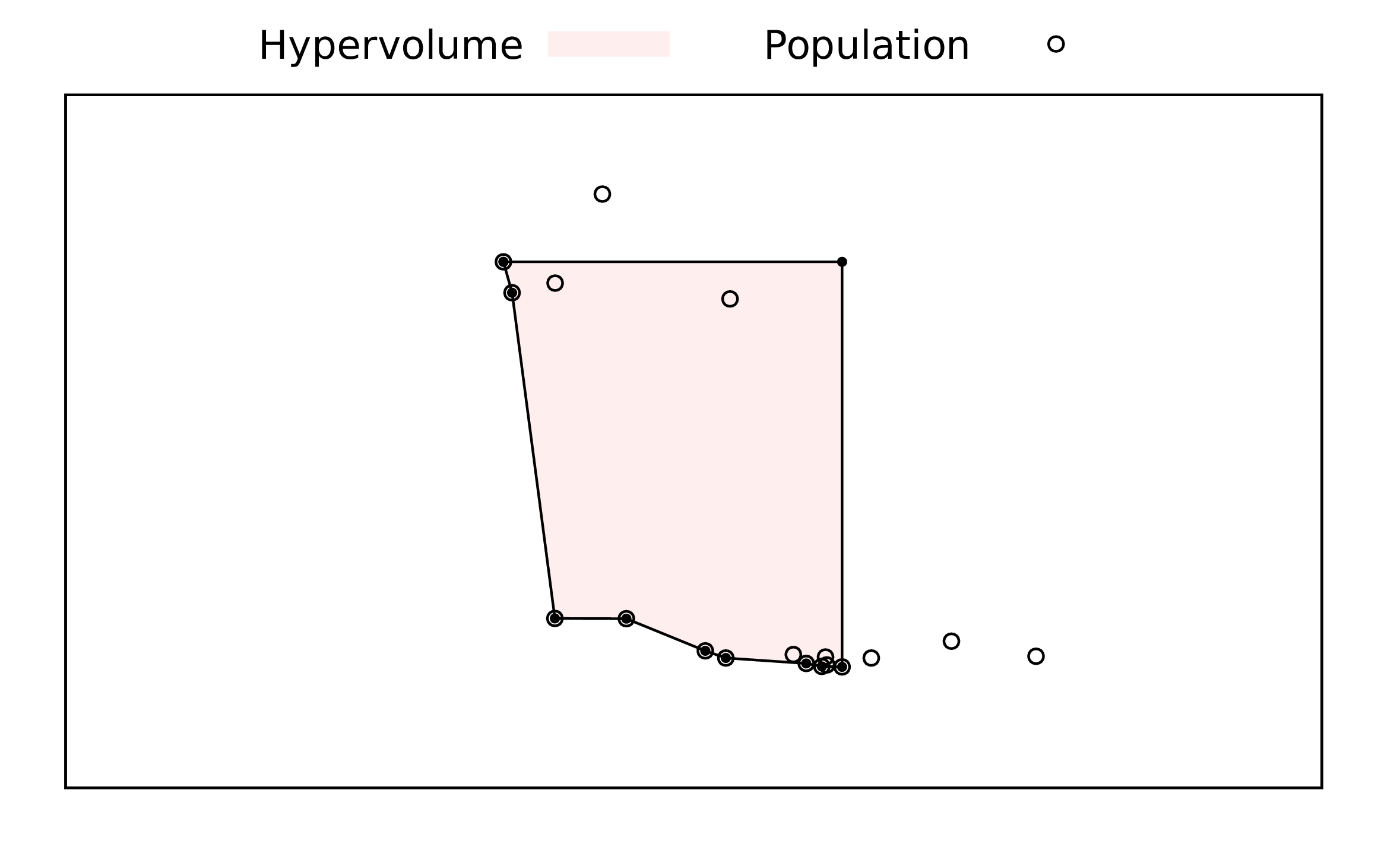

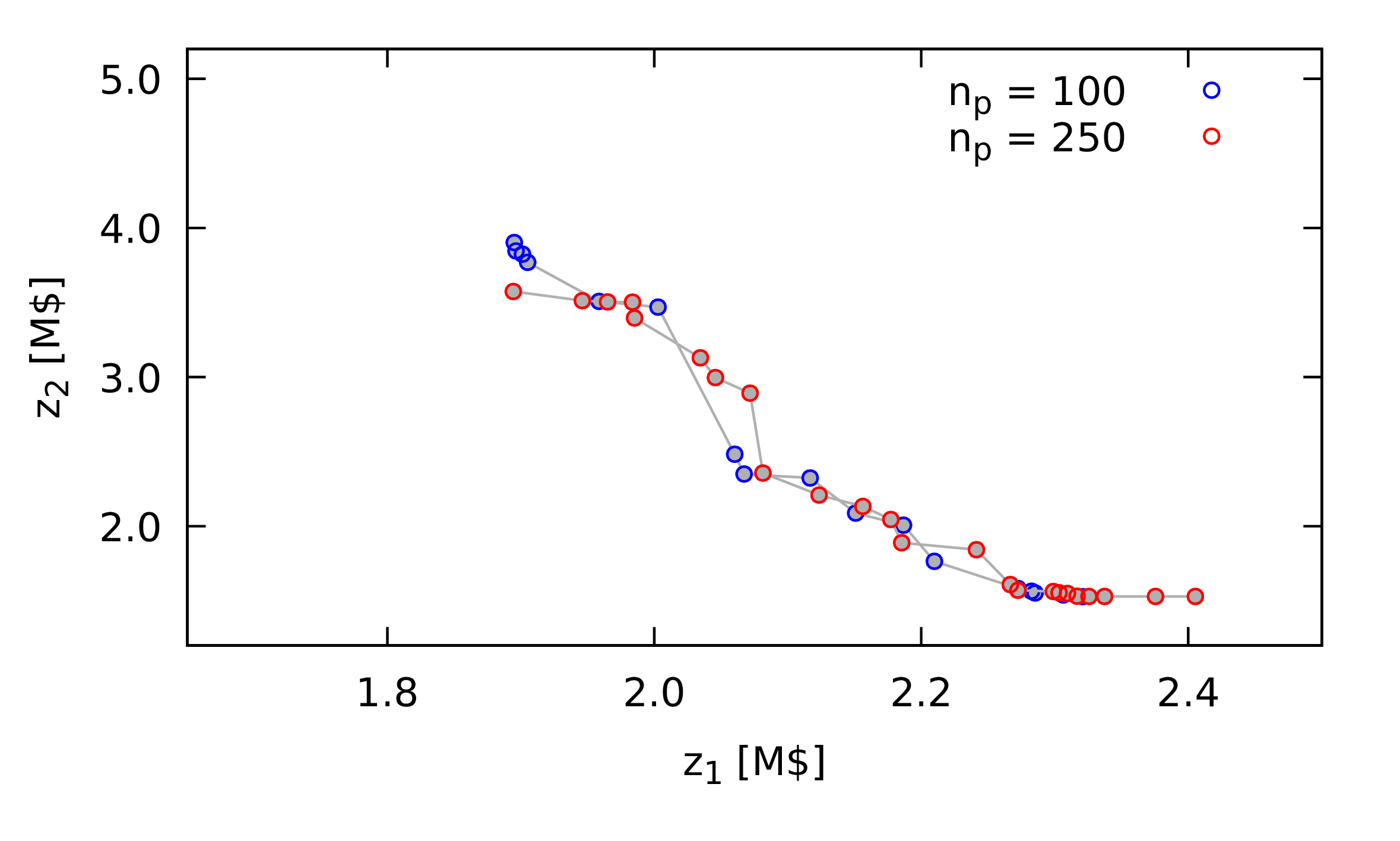

Figure 1: An illustration of the hyper-volume (area for bicriteria case) used as a measure of the quality of the solutions obtained. The circles are points in the population, plotted in objective function space with each axis corresponding to one criterion and with the assumption that each criterion is a value to be minimised. Those open circles that include a filled circle are non-dominated points, a set that is meant to be an approximation to the Pareto frontier. The shaded area is the hyper-volume to be calculated.

The method suggested in this book is an estimate of the hyper-volume described by a convex region defined by the set of non-dominated points and the extensions of the best points for each criterion. Figure 1 illustrates the hyper-volume for a bi-criteria problem, with the area measured shaded.

The hypervolume gives an indication of the breadth of the approximation to the Pareto frontier but it does not assess the quality of the approximation with respect to the actual frontier. To compare between methods, we also incorporate the distance to a utopia point. As this point is not known, we use a point that is in the right direction. For minimisation problems where the objective function values are all positive real values, we can use the origin as the utopia point.

The ratio of the hyper-volume to the distance to the utopia point, squared, is a measure that can be used to compare different methods. It captures the aspects that are of concern; whether the actual measure has any meaning is unclear.

The following code implements the calculation of the hypervolume, actually an area, and distance to the utopia point for bicriteria problems. The argument to the function is the set of non-dominated points in the population.

1:function hypervolume(points)2:m = length(points)3:n = length(points[1].z)4:set = Matrix{Real}(undef, m, n)5:for r ∈ 1:m6:set[r,1:n] = points[r].z7:end8:z = sortslices(set, dims=1, by=x->(x[1], x[2]))9:area = 0.010:last = z[m,n]11:first = z[1,1]12:distance = sqrt(z[1,1]^2 + z[1,2]^2)13:for i ∈ 2:m14:area += (z[i,1]-z[i-1,1]) * ((z[1,2]-z[i-1,2])+(z[1,2]-z[i,2])) / 2.015:d = sqrt(z[i,1]^2 + z[i,2]^2)16:if d < distance17:distance = d18:end19:end20:# maximise area, minimise distance21:# larger outcome better22:area/distance^223:end

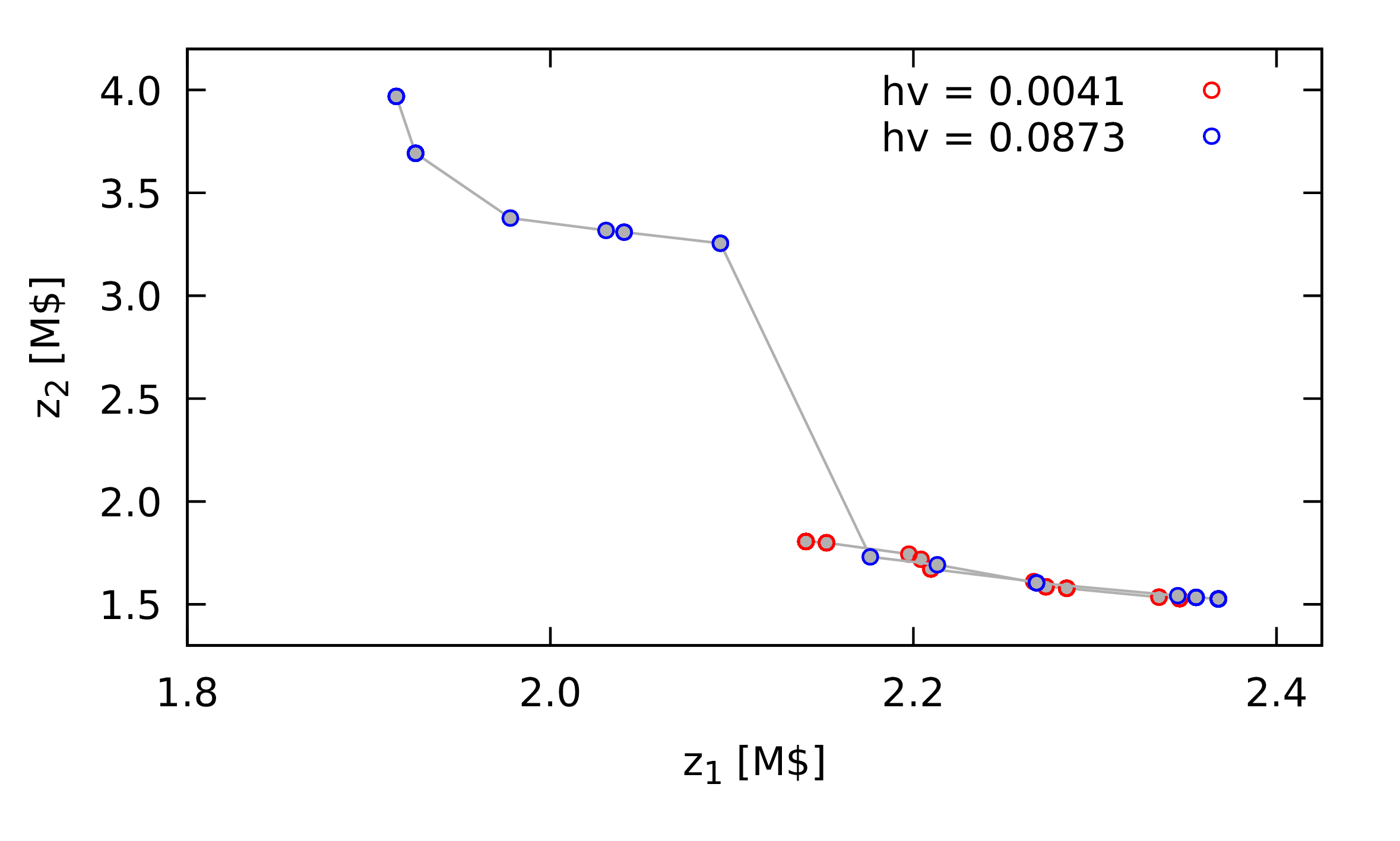

Figure 2: Comparison of set of non-dominated points obtained using a nature inspired method with different outcomes, illustrating the difference between an outcome with a low hypervolume measure (\(hv = 0.0041\), in red) and an outcome with a higher hypervolume measure (\(hv = 0.0873\), in blue). \(z_1\) and \(z_2\) are the two objective function values, with the goal of minimising both.

Later, I will present the application of nature inspired methods for a chemical process design problem. Figure 1 shows two different outcomes from such a method. In red, bottom right of the plot, is the less desirable outcome, as compared with the outcome in blue which has greater breadth and similar quality in terms of actual any single combination of objective function values obtained.

2.3. Genetic Algorithms

Genetic algorithms are inspired by Darwinian evolution and, specifically, the survival of the fittest together genetic operators based on sexual reproduction and mutations [Holland 1975; Goldberg 1989; Goh et al. 2003; Katoch et al. 2021]. There are three key aspects that define a genetic algorithm:

- selection, based on fitness;

- crossover where new solutions are generated inspired by meiosis; and,

- mutation where a new solution is generated by a small modification to an existing solution.

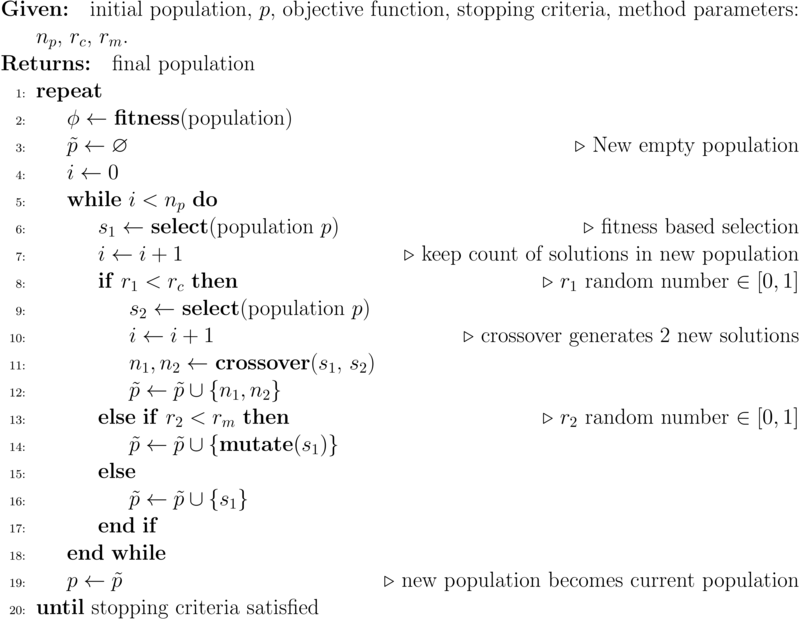

The basic algorithm is shown in Algorithm 2.

As presented, the algorithm does not include elitism. Elitism is a means of ensuring that a very good solution identified during the evolutionary procedure is not lost due to selection. In the implementation below, the best solution in any generation will automatically be included in the next population. This is known as elitism of size 1. For multi-objective optimization problems, the best solution will be one chosen arbitrarily from the set of non-dominated solutions, typically the solution that is the best with respect to one of the criteria. Other forms of elitism, especially for multi-objective problems, are possible and often desirable.

There are many variations on this algorithm, such as whether mutation should be applied potentially to the new solutions obtained by crossover and whether there should be a form of elitism where the best solutions in a population are guaranteed to be in the new population. Neither of those aspects are included in this algorithm. The aim of this book is not to explore the impact of the many variations possible but to illustrate the commonalities and differences between different nature inspired evolutionary population based methods.

The stopping criterion for this method, as for the others presented in the next chapters, will be the number of function evaluations. Again, many different stopping criteria could be considered including the number of cycles or generations to perform, the total time elapsed, and whether the population is stable in the sense that there is no longer any improvement in the best solution or solutions. As one of the case studies has significant computational requirements, the chlorobenzene process design, the number of function evaluations is an appropriate stopping criterion. Although it does not take into account the overhead of the actual method, the three methods considered in this book will have similar overhead.

From the algorithm, the outcome will be based on the implementation of the select, crossover, and mutate functions as well as the values of the parameters: the population size, \(n_p\), the crossover rate, \(r_c\), and the mutation rate, \(r_m\). These functions and parameters will typically require adaptation to the particular problem. The select method, however, will be generic and has already been defined above in Section 2.2.4. The crossover and mutate methods need to be based on the specific representation of solutions so may need tailored implementations.

2.3.1. The crossover operation

Many problems are based on vectors of real numbered values for the decision variables. An example is the chlorobenzene process design problem already alluded to. Therefore, it makes sense to have a function for crossover that works on such representations. Again, multiple dispatch will ensure the appropriate implementation is chosen depending on the data types used to represent decision points.

Crossover may be single point, where all elements before that point are swapped, it may be two points, or it could in fact be considered value by value in the representation vector. We implement the latter in this book as, arguably, it is more general and does not impose any meaning into the order of the decision variables in the chromosome. A multi-point crossover subsumes both single and two point crossover methods.

1:function crossover(p1 :: Vector{T}, p2 :: Vector{T}) where T <: Number2:n = length(p1)3:@assert n == length(p2) "Points to crossover must be of same length"4:# generate vector of true/false values with5:# true meaning "selected" in comments that6:# follow7:alleles = rand(Bool, n)8:# first new solution (n1) consists of p1 with9:# selected alleles from p2; note that we need10:# to copy the arguments as otherwise we would11:# be modifying the contents of the arguments.12:n1 = copy(p1)13:n1[alleles] = p2[alleles]14:# the second new solution is the other way15:# around: p2 with selected from p116:n2 = copy(p2)17:n2[alleles] = p1[alleles]18:n1, n219:end

Note that we fully qualify the arguments to the function by data type. This will enable Julia to apply the appropriate crossover implementation depending on the data type for the representation used for points in the search space. Later we shall see how we implement a different crossover function for the TSP representation, a Path.

To test out this code, we create two vectors of real numbers and apply the crossover several times to these to see how the results vary:

1:using NatureInspiredOptimization: printvector2:using NatureInspiredOptimization.GA: crossover3:using Printf4:let x1 = rand(4), x2 = rand(4)5:@printf "\n "6:printvector("x1: ", x1)7:@printf "| "8:printvector("x2: ", x2)9:@printf "\n\n"10:for i ∈ 1:511:@printf "%d. " i12:n1, n2 = crossover(x1,x2)13:printvector("n1: ", n1)14:@printf "| "15:printvector("n2: ", n2)16:@printf "\n"17:end18:end

which generates output such as this:

x1: 0.210 0.587 0.845 0.819 | x2: 0.657 0.392 0.471 0.275 1. n1: 0.657 0.392 0.471 0.275 | n2: 0.210 0.587 0.845 0.819 2. n1: 0.657 0.587 0.845 0.819 | n2: 0.210 0.392 0.471 0.275 3. n1: 0.657 0.587 0.845 0.819 | n2: 0.210 0.392 0.471 0.275 4. n1: 0.657 0.587 0.471 0.819 | n2: 0.210 0.392 0.845 0.275 5. n1: 0.210 0.587 0.845 0.275 | n2: 0.657 0.392 0.471 0.819

2.3.2. The mutation operator

Mutation introduces new genetic material into the population by creating new solutions based on existing ones with a small change. In a bit-wise representation, a small change would be toggling one of the bits. For a real number vector representation, a small change has been defined by a random change to one element of the vector. The following code implements this, ensuring that the changed element remains within the bounds defined for the decision variables:

1:function mutate(p, lower, upper)2:m = copy(p) # passed by reference3:n = length(p) # vector length4:i = rand(1:n) # index for value to mutate5:a = lower[i] # lower bound for that value6:b = upper[i] # and upper bound7:r = rand() # r ∈ [0,1]8:# want r ∈ [-0.5,0.5] to mutate around p[i]9:m[i] = p[i] + (b-a) * (r-0.5)10:# check for boundary violations and rein back in11:m[i] = m[i] < a ? a : (m[i] > b ? b : m[i])12:m13:end

Of special note is line 2 where a copy of the specific point is made. Julia passes vectors, for instance, by reference so any modification of the argument will affect the variable in the calling function. In this case, we want to create a new point in the search space without changing the existing point. Therefore, we make a copy of the argument before modifying it.

Again, we can test out the mutation operator by creating an initial vector and applying the operator several times:

1:using NatureInspiredOptimization: printvector2:using NatureInspiredOptimization.GA: mutate3:using Printf4:let x = rand(5);5:@printf "\n "6:printvector("x: ", x)7:@printf "\n\n"8:for i ∈ 1:59:@printf "%d. " i10:n = mutate(x,11:zeros(length(x)),12:ones(length(x)))13:printvector("n: ", n)14:@printf "\n"15:end16:end

which generates output such as this:

x: 0.166 0.154 0.703 0.909 0.348 1. n: 0.626 0.154 0.703 0.909 0.348 2. n: 0.166 0.154 0.703 0.909 0.315 3. n: 0.166 0.154 0.703 0.909 0.729 4. n: 0.166 0.154 0.703 1.000 0.348 5. n: 0.166 0.154 0.730 0.909 0.348

2.3.3. The implementation

With the three key operations (select, crossover, mutate) defined, the genetic algorithm itself can be implemented:

1:using NatureInspiredOptimization: fitness, mostfit, Point, select, statusoutput2:function ga(3:# required arguments, in order4:p0, # initial population5:f; # objective function6:# optional arguments, in any order7:lower = nothing, # bounds for search domain8:upper = nothing, # bounds for search domain9:parameters = nothing, # for objective function10:nfmax = 10000, # max number of evaluations11:np = 100, # population size12:output = true, # output during evolution13:rc = 0.7, # crossover rate14:rm = 0.05) # mutation rate15:16:p = p0 # starting population17:lastmag = 0 # for status output18:lastnf = 0 # also for status output19:nf = 0 # count evaluations20:while nf < nfmax21:ϕ = fitness(p)22:best = mostfit(p,ϕ)23:newp = [best] # elite size 124:lastmag, lastnf = statusoutput(output, nf,25:best, lastmag,26:lastnf)27:i = 028:while i < np29:print(stderr, "nf=$nf i=$i\r")30:point1 = p[select(ϕ)]31:if rand() < rc # crossover?32:point2 = p[select(ϕ)]33:newpoint1, newpoint2 = crossover(point1.x, point2.x)34:push!(newp,35:Point(newpoint1, f, parameters))36:push!(newp,37:Point(newpoint2, f, parameters))38:nf += 239:i += 240:elseif rand() < rm # mutate?41:newpoint1 = mutate(point1.x, lower, upper)42:push!(newp,43:Point(newpoint1, f, parameters))44:nf += 145:i += 146:else # copy over47:push!(newp, point1)48:i += 149:end50:end51:p = newp52:end53:ϕ = fitness(p)54:best = mostfit(p,ϕ)55:lastmag, lastnf = statusoutput(output, nf,56:best,57:lastmag, lastnf)58:best, p, ϕ59:end

Given that this is the first full optimization method implemented in the book, it is worth describing many of the lines of code, by line number:

- This brings in the named functions and types from the main module, including the

fitnessandselectfunctions. - There are two required arguments for the

gamethod: the initial population,p0, and the objective function used to evaluate points in the search space. - This argument and the ones that follow are optional. We will see below how optional arguments are specified when the function is invoked. The values given here are the defaults for when the arguments are not specified.

- All the methods will use the same stopping criterion: a maximum number of evaluations of the objective function. So

nfkeeps count of how many evaluations have been invoked andnfmaxis the limit. - The genetic algorithm will be specified with a rate for crossover and a rate for mutation. On this line, a decision is made to cross the solution obtained, on the previous line, with another solution. Line 40 similarly checks to see if the selected solution should be mutated. If neither condition holds, line 46, the point selected is simply inserted in the new population,

newp. - For crossover, two solutions are required so a second solution is selected. The following lines apply the crossover function and add the resulting points to the population.

- For mutation, the already selected point is evaluated and added to the population.

- The GA function returns the best solution in the final population, the full population itself, and the fitness of that population. The latter two elements are useful for gaining insight into the performance of the evolution (e.g. diversity) and for debugging should it be necessary.

The implementation is based on some implicit decisions as well. For instance, many authors of genetic algorithms will apply the mutation operator potentially to each of the solutions obtained by the crossover operation. I have taken decision to not do this, noting that any solution that results from crossover will have a chance to be selected for mutating in the next generation. Note also that this implementation does not make use of the inherent parallelism: all the new points could be collected and evaluated in parallel at the end of each generation. The implementation for the plant propagation algorithm (Chapter 2.5 below) will do this, however. The aim of this book is not to achieve the most efficient implementations but to illustrate what is possible in Julia. Comparisons presented later will focus on the quality of the solutions obtained and not the computational performance.

2.4. Particle Swarm Optimization

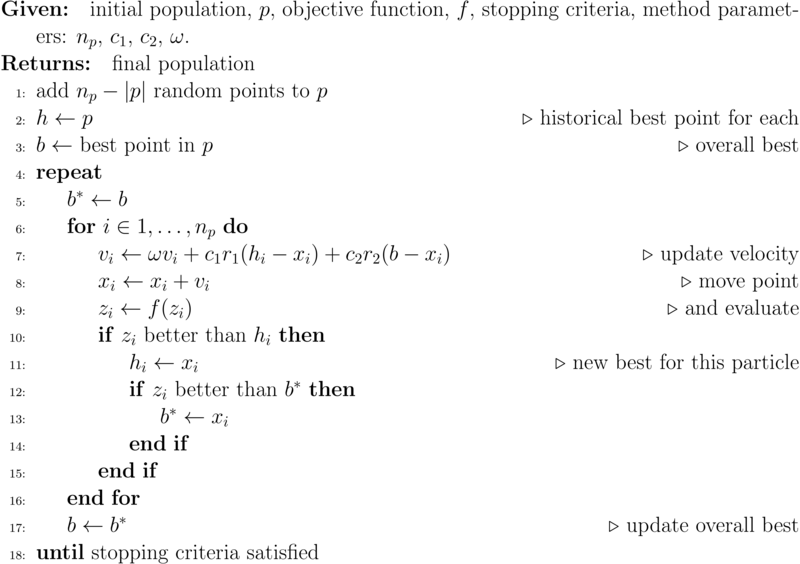

The original method was developed by Kennedy & Eberhart [Kennedy and Eberhart 1995]. The basic idea is that each particle (a solution to the optimization problem) in a swarm has a velocity, allowing it to move through the search space. This velocity is adjusted each iteration by taking into account each particle's own history, specifically the best point it has encountered to date, and the swarm's overall history, the best point encountered by all particles in the swarm. See Algorithm 1.

The equation for updating the velocity of each particle, \(i\), is

\begin{equation} \label{org2663845} v_i \gets \omega v_i + c_1 \left ( h_i - x_i \right ) + c_2 \left ( b - x_i \right ) \end{equation}where \(x_i\) is the current position of the particle, \(v_i\) its velocity, \(h_i\) the best place this particle has been (its historical best), and \(b\) the best point found by the whole population so far. The ω parameter provides inertia for non-zero values and the parameters \(c_1\) and \(c_2\) weigh the contributions from local history and global history towards the change in velocity.

Due to the calculations for updates to velocities and the use of the velocities to move the particles, this method is naturally directed at problems where the decision variables are real numbers. Although the method has been adapted to integer problems [Datta and Figueira 2011], the implementation here is for real-valued vectors as decision variables.

2.4.1. The implementation

The implementation has a similar layout to that for the genetic algorithm, consisting of a loop where the population of solutions is updated. It is simpler in that every member of the population is updated using the velocities and these velocities are updated continually by equation \eqref{org2663845}.

1:using NatureInspiredOptimization: ≻, Point, randompoint, statusoutput2:function pso(3:# required arguments, in order4:p0, # initial population5:f; # objective function6:# optional arguments, in any order7:lower = nothing, # bounds for search domain8:upper = nothing, # bounds for search domain9:parameters = nothing, # for objective function10:nfmax = 10000, # max number of evaluations11:np = 10, # population size12:output = true, # output during evolution13:c₁ = 2.0, # weight for velocity14:c₂ = 2.0, # ditto15:ω = 1.0 # inertia for velocity16:)17:18:# create initial full population including p019:p = copy(p0)20:for i ∈ length(p0)+1:np21:push!(p, Point(randompoint(lower, upper),22:f, parameters))23:end24:nf = np25:# find current best in population26:best = begin27:b = p[1]28:for i ∈ 2:np29:if p[i] ≻ b30:b = p[i]31:end32:end33:b34:end35:h = p # historical best36:v = [] # initial random velocity37:for i ∈ 1:np38:push!(v, (upper .- lower) .*39:( 2 * rand(length(best.x)) .- 1))40:end41:lastmag = 0 # for status output42:lastnf = 0 # as well43:while nf < nfmax44:lastmag, lastnf = statusoutput(output, nf,45:best,46:lastmag,47:lastnf)48:for i ∈ 1:np49:print(stderr, "nf=$nf i=$i\r")50:v[i] = ω * v[i] .+51:c₁ * rand() * (h[i].x .- p[i].x) .+52:c₂ * rand() * (best.x .- p[i].x)53:x = p[i].x .+ v[i]54:# fix any bounds violations55:x[x .< lower] = lower[x .< lower]56:x[x .> upper] = upper[x .> upper]57:# evaluate and save new position58:p[i] = Point(x, f, parameters)59:nf += 160:# update history for this point61:if p[i] ≻ h[i]62:h[i] = p[i]63:end64:# and global best found so far65:if p[i] ≻ best66:best = p[i]67:end68:end69:end70:lastmag, lastnf = statusoutput(output, nf, best,71:lastmag, lastnf)72:best, p73:end

Some notes, again by line number:

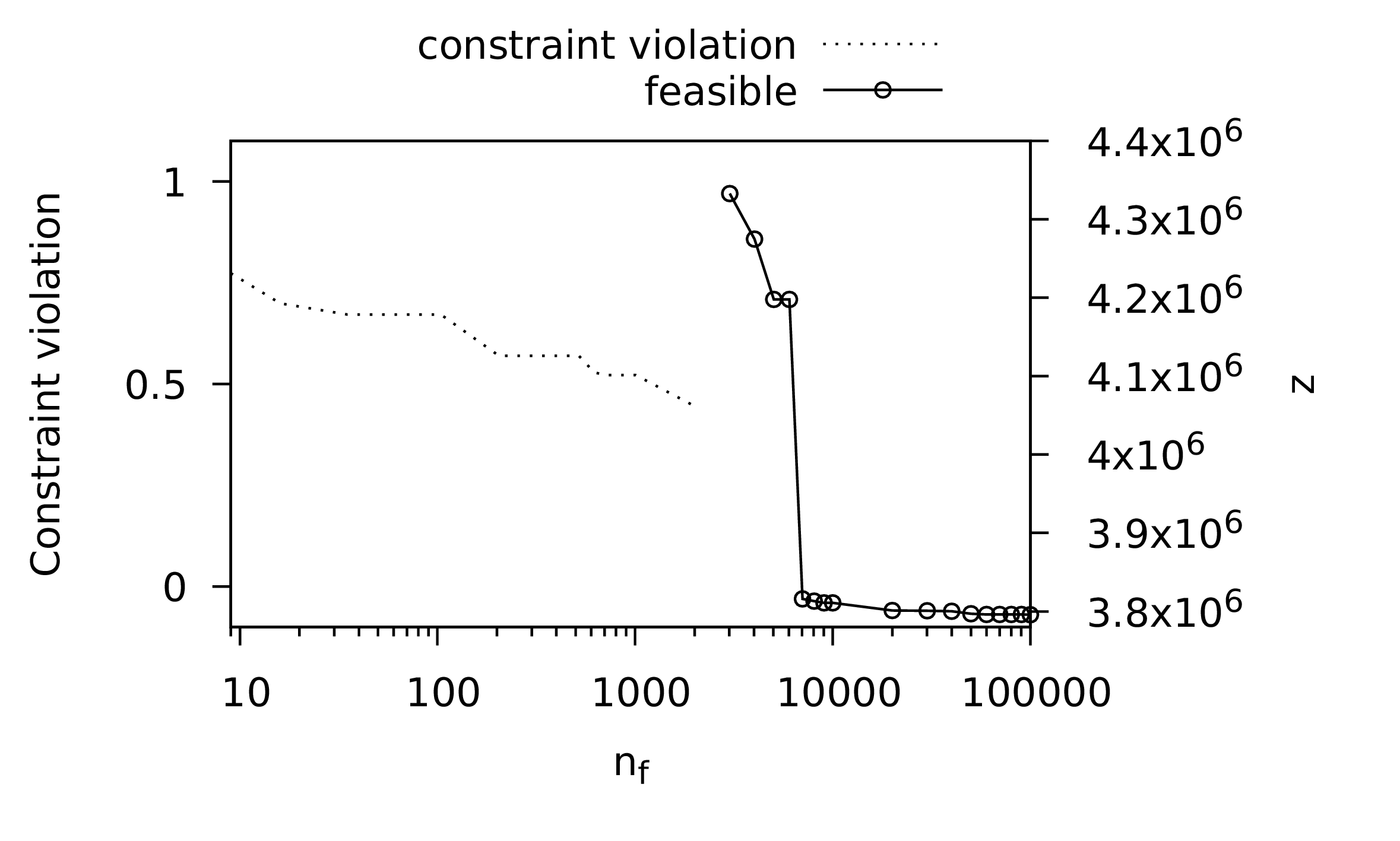

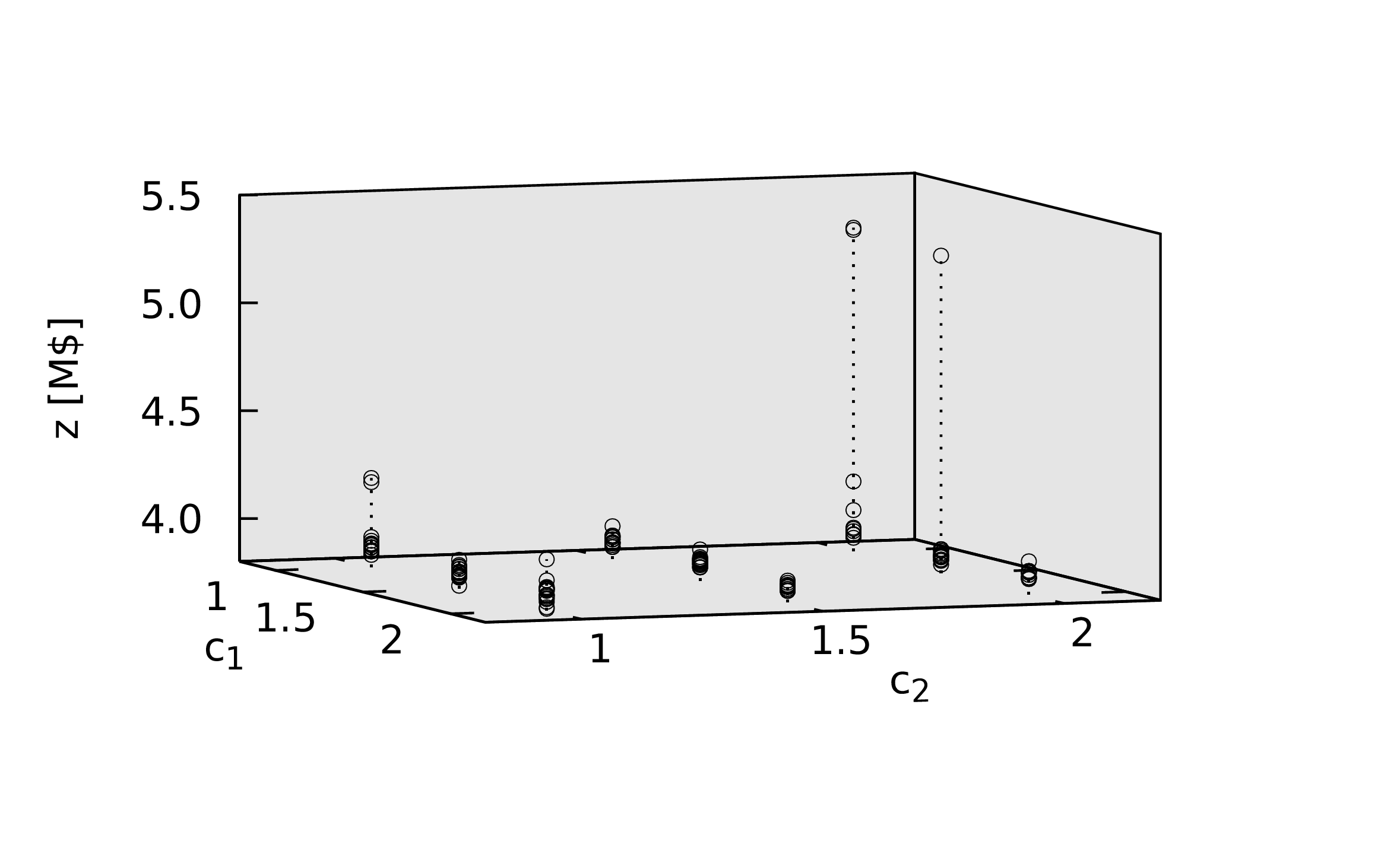

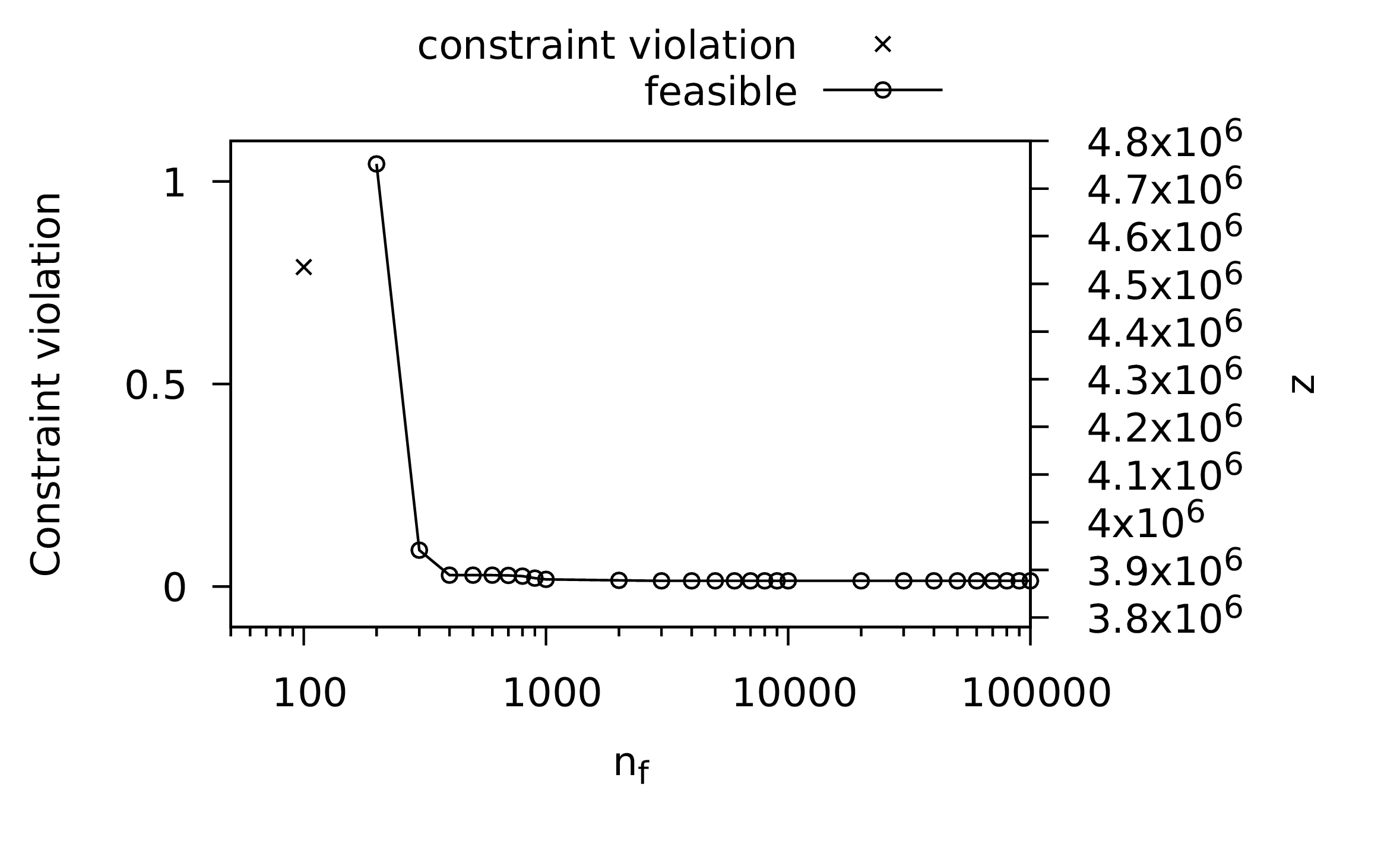

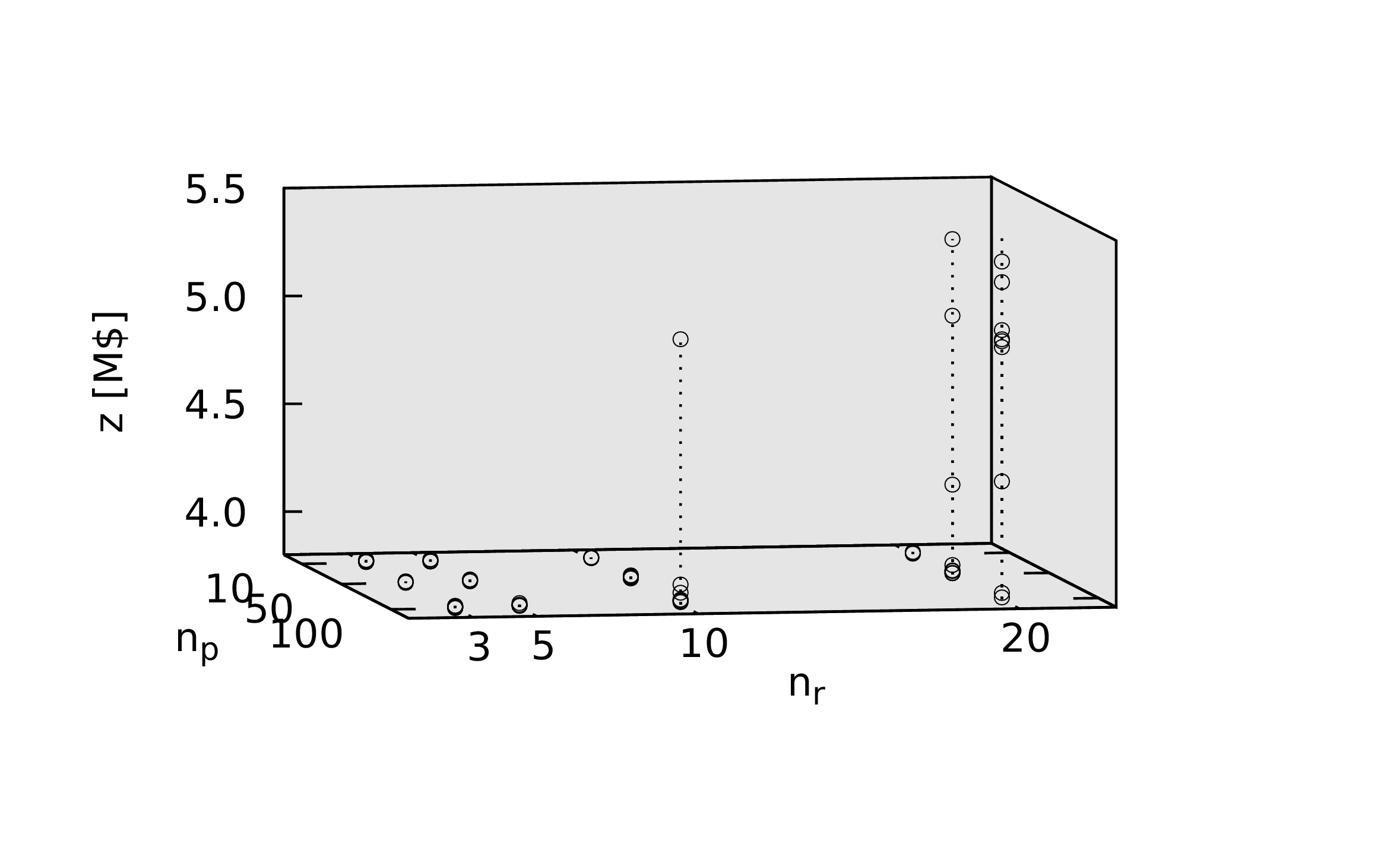

- The default values of the velocity update parameters are based on the analysis presented below in the first substantial case study and are in line with the original implementation [Kennedy and Eberhart 1995].

- This loop ensures that the population of points has the desired number of points. The PSO method differs from the others in that each individual in the population evolves but no new points are created. This is a subtle distinction but the implication is that the initial population must have the correct number of solutions.

- The initial velocity of each particle is randomly allocated.

- Velocity updated.

- Point moved according to the velocity with the following lines ensuring all values of the point are within the bounds for the search domain.

- This line and the ones that follow update both the point's own historical best as well as the overall population's best solution to date.

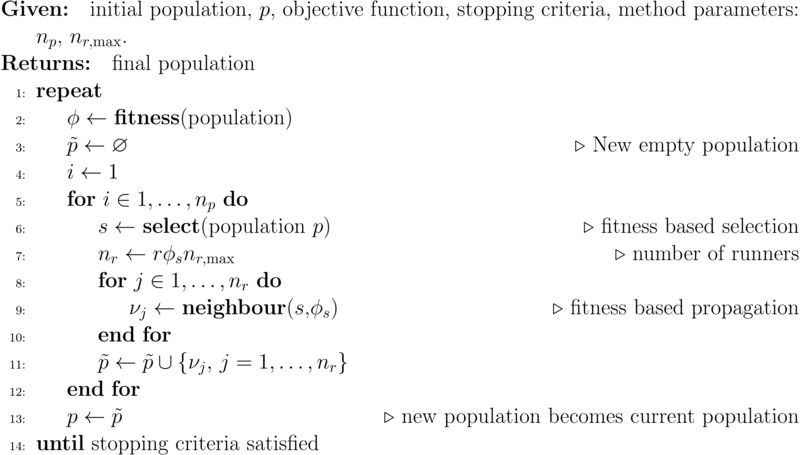

2.5. Plant Propagation Algorithms

The plant propagation algorithm (PPA) [Salhi and Fraga 2011] is inspired by one of the methods used by strawberry plants to propagate: the generation of runners. In fertile soil, strawberry plants send of a number of short runners to exploit the current surroundings. In less fertile soil, fewer yet longer runners propagate to explore more broadly. The PPA therefore creates new solutions, based on existing solutions in a population, by generating a number of runners. The number of runners is proportional to the fitness and the distance these runners propagate is inversely proportional to the fitness. This is similar in motivation as an adaptive scheme for mutation in genetic algorithms [Marsili Libelli and Alba 2000].

The PPA is shown in Algorithm 1.

The plant propagation algorithm depends on three support functions: fitness, select, and neighbour. The first two have already been implemented as generic functions suitable for any method. The last, however, is specific to the plant propagation algorithm.

2.5.1. Identifying neighbouring solutions

Propagation, whether in the close vicinity of an existing solution or further away, is defined through the neighbour function. This function is similar to the mutate function implemented for the genetic algorithm method. It differs in that the random change is a function of the fitness of the solution to propagate.

In the mutate method for the genetic algorithm, for the case of decision variables being represented by a vector of real values, a single value is chosen for change. For the neighbour function, all values are changed simultaneously, each with a different random number. In all cases, the amount to change each variable is adjusted by the fitness: the fitter the solution, the smaller the changes considered; the less fit the solution, the larger the changes allowed.

1:function neighbour(s, # selected point2:lower, # lower bounds3:upper, # upper bounds4:ϕ) # fitness5:r = rand(length(s))6:# element by element calculation7:ν = s + (upper - lower) .*8:((2 * (r .- 0.5)) * (1-ϕ))9:# check for bounds violations10:ν[ν .< lower] = lower[ν .< lower]11:ν[ν .> upper] = upper[ν .> upper]12:return ν13:end

Lines 7 and 8 create a new solution from the current selected solution by using the random numbers from line 5 and adjusting these through the fitness, φ. The new point is adjusted to ensure it remains within the bounds of the search domain in lines 10 and 11.

2.5.2. The implementation

With the neighbour function implemented, the full plant propagation algorithm can be written:

1:using NatureInspiredOptimization: fitness, mostfit, Point, select, statusoutput2:function ppa(3:# required arguments, in order4:p0, # initial population5:f; # objective function6:# optional arguments, in any order7:lower = nothing, # bounds for search domain8:upper = nothing, # bounds for search domain9:parameters = nothing, # for objective function10:nfmax = 10000, # max number of evaluations11:np = 10, # population size12:output = true, # output during evolution13:nrmax = 5) # maximum number of runners14:15:p = p0 # starting population16:lastmag = 0 # for status output17:lastnf = 0 # also for status output18:nf = 0 # function evaluations19:while nf < nfmax20:ϕ = fitness(p)21:best = mostfit(p,ϕ)22:newp = [best] # elite set size 123:lastmag, lastnf = statusoutput(output, nf,24:best,25:lastmag,26:lastnf)27:newpoints = []28:for i ∈ 1:np29:print(stderr, "nf=$nf i=$i\r")30:s = select(ϕ)31:nr = ceil(rand() * ϕ[s] * nrmax)32:for j ∈ 1:nr33:push!(newpoints,34:neighbour(p[s].x, lower,35:upper, ϕ[s]))36:nf += 137:end38:end39:Threads.@threads for x in newpoints40:push!(newp, Point(x, f, parameters))41:end42:p = newp43:end44:ϕ = fitness(p)45:best = mostfit(p,ϕ)46:lastmag, lastnf = statusoutput(output, nf,47:best,48:lastmag, lastnf)49:best, p, ϕ50:end

Again, this implementation has a similar layout as the previous two methods. The key differences are the following lines:

- The number of runners, i.e. new solutions to generate from an existing solution, is a random number up to \(n_{r,\max}\) adjusted by the fitness, \(\phi_s\). Recall that the fitness value is ∈ (0,1). The fitter the solution, the more runners are likely.

- The runners are created using the

neighbourfunction and stored in thenewpointsvector. The points are not evaluated here; the evaluation is postponed until line 38. All the points created in this overall loop are evaluated now, potentially in parallel through the use of Julia's

@threadsmacro. By default, only a single thread is available but Julia can be told to use multiple threads. See Section 1.5.3.

2.6. Random search

It is useful to have a control experiment to confirm that any or all of the above methods are indeed effective at search. Whether a fully random search could be considered a method inspired by nature or not, such a method is in the spirit of the other nature inspired methods in being stochastic. There are no parameters and the method relies on a function to generate new random solutions in the search space.

The actual search generates \(n_f\) random points, and to provide some statistics for comparison with the evolutionary methods, output is generated periodically with the best solution found so far.

1:using NatureInspiredOptimization: Point, statusoutput2:function randomsearch(3:# required arguments, in order4:f; # objective function5:# optional arguments, in any order6:lower = nothing, # bounds for search domain7:upper = nothing, # bounds for search domain8:parameters = nothing, # for objective function9:nfmax = 10000, # max number of evaluations10:output = true) # output during evolution11:12:lastmag = 0 # for status output13:lastnf = 0 # also for status output14:15:best = Point(randompoint(lower,upper), f,16:parameters)17:nf = 1 # function evaluations18:while nf < nfmax19:p = Point(randompoint(lower,upper),20:f, parameters)21:nf += 122:if p ≻ best23:best = p24:end25:lastmag, lastnf = statusoutput(output, nf,26:best,27:lastmag,28:lastnf)29:end30:lastmag, lastnf = statusoutput(output, nf,31:best,32:lastmag, lastnf)33:best34:end

2.7. Illustrative example

A simple benchmark problem is now present to illustrate how the nature inspired methods may be used. The benchmark problem is

\begin{align} \label{orga2aeda6} \min_x f(x) &= \sum_{i=1}^{d}\left( \sum_{j=1}^d \left (j^i - \beta \right) \left ( \left ( \frac {x_j} {j} \right )^i -1 \right ) \right )^2 \\ x & \in [-d, d]^d \subset \mathbb{R}^d \nonumber \end{align}where \(d\) is dimension of \(x\) and β typically 0.5. This is a continuous function defined on the full domain. It is smooth. As such, it is not the type of problem that requires black box optimization methods. However, this benchmark problem has been chosen to demonstrate the Julia code required to define a problem and solve it using the different solvers described previously.

The first step is to define a function that implements the objective function in Equation \eqref{orga2aeda6}:

1:function permdbeta(x, β = 0.5)2:d = length(x)3:z = sum( sum( (j^i + β)*((x[j]/j)^i - 1) for j ∈ 1:d )^2 for i ∈ 1:d )4:(z, 0)5:end

This code illustrates some elegant programming elements of Julia for working with vectors. The summation signs in Eq. \eqref{orga2aeda6} translate directly into Julia: line 3. For instance, \(\sum_{i=1}^n x_i\) may be written as

sum( x[i] for i in 1:n )

in Julia.

The first line illustrates the use of default values for positional arguments, as opposed to optional arguments, when those arguments are not specified when the function is invoked. In this case, if only one argument is given, i.e. x, the value of β defaults to 0.5. This is the value typically used as a benchmark.

The code also highlights, line 4, that the objective function must return a tuple consisting of the actual value of the objective function, z, along with an indication of constraint satisfaction: 0 or less for a feasible point, a value greater than 0 for infeasible with the actual value ideally a measure of how far from feasible the point may be. For this example, as the problem is feasible over the full domain, the second element of the tuple is always zero.

For all the methods, the following initialization is common:

1:using NatureInspiredOptimization: Point2:d = 4 # dimension3:x0 = zeros(d) # initial guess4:a = -d * ones(d) # domain5:b = d * ones(d) # domain6:p0 = [Point(x0,permdbeta)] # initial population

where the dimension of the problem is set to 4, an initial point at the origin is defined, and the lower and upper bounds of the search domain defined. The initial population, p0, consists of the initial point evaluated with the objective function.

The GA and PSO methods require an initial population that is of the size they expect to evolve. The PPA can start with a population of size 1. However, for consistency, all methods will be started with the same size initial population:

1:using NatureInspiredOptimization: randompoint2:n = 40 # population size3:while length(p0) < n4:push!(p0, Point(randompoint(a, b), permdbeta))5:end

Given the random initial population, we can now apply each of the methods. First, the genetic algorithm:

1:using NatureInspiredOptimization.GA: ga2:gabest, gapop = ga(p0, permdbeta;3:np = n,4:lower = a,5:upper = b,6:nfmax = 10_000_000)

Note that use of _ to denote separation of digits for large numbers, in line 2. 10_000_000 is much easier to read than 10000000 although, of course, we could have used exponential notation, 1e7.

The particle swarm optimization method is invoked similarly:

1:using NatureInspiredOptimization.PSO: pso2:psobest = pso(p0, permdbeta;3:lower = a,4:upper = b,5:np = n,6:nfmax = 10_000_000)

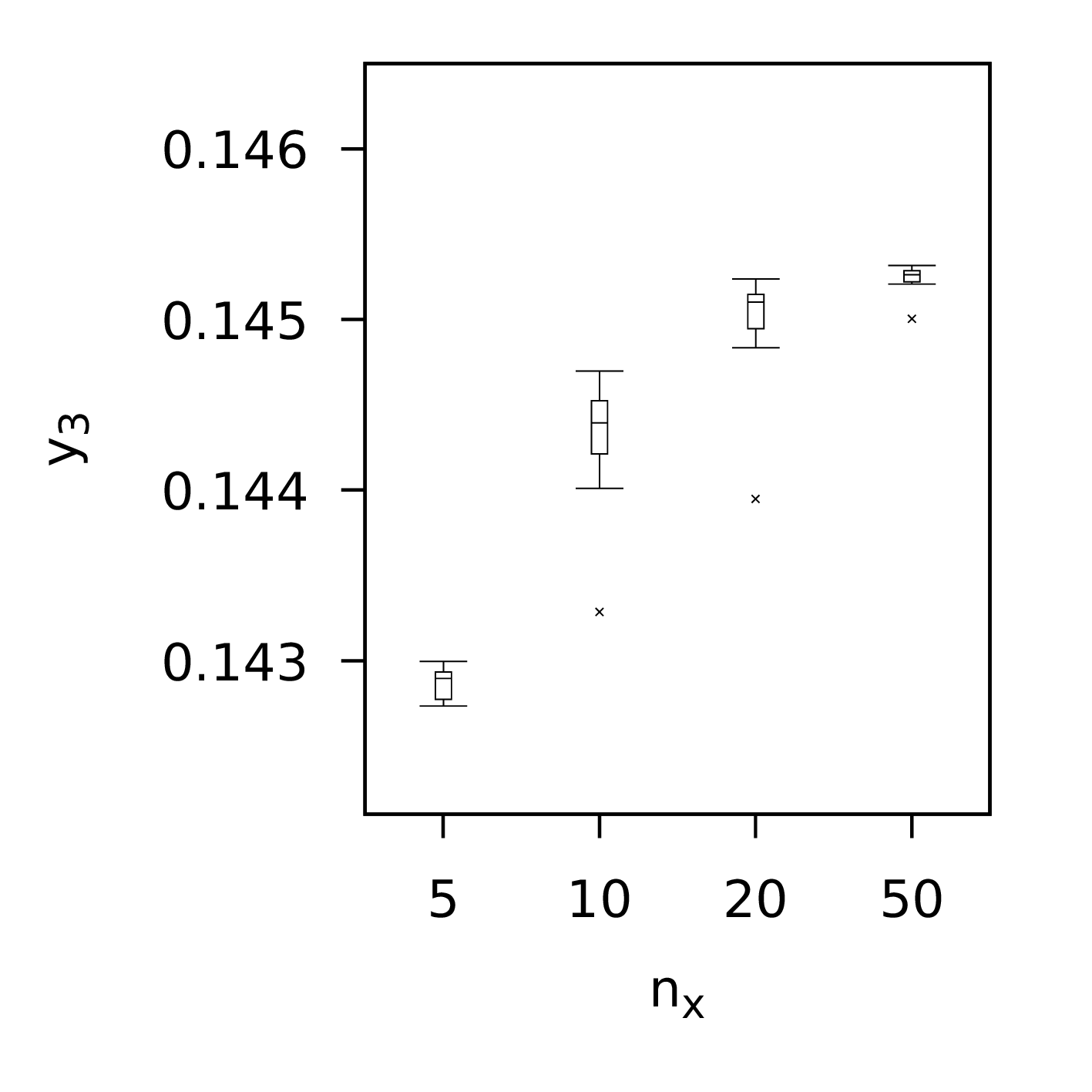

Next, the plant propagation algorithm which differs in that the population size, n, is smaller than that used for the other methods. This is partly because the PPA generates multiple new solutions from each solution chosen for propagation, on average 2.5-3 for each, and also because of the results of the analysis we present in the next section of this book:

1:n = 10 # population size2:using NatureInspiredOptimization.PPA: ppa3:ppabest, ppapop = ppa(p0, permdbeta;4:np = n,5:lower = a,6:upper = b,7:nfmax = 10_000_000)

Finally, as the control for the experiment, the random search method:

1:using NatureInspiredOptimization: randomsearch2:rsbest = randomsearch(permdbeta;3:lower = a,4:upper = b,5:nfmax = 10_000_000)

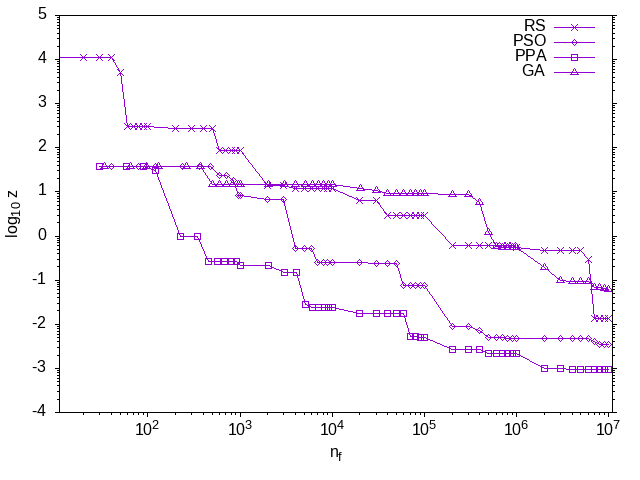

Figure 1: The evolution of the best solution in the current population for each of the three nature inspired optimization methods along with the random search. The profiles for all of the methods, except for the random search, share the starting point as the same initial random population was used. The profiles show that all the methods improve over time. The PPA has the fastest rate of decrease, followed by the PSO and GA methods. The random search is slower initially but actually finds a better solution than the GA by the end. Both axes are plotted in a log scale.

The results from the above code are summarised in Figure 1. This figure shows the evolution of the best solution in the population. The stochastic nature of the methods means that every time one of the methods is applied, the results will differ. When we present a number of case studies below, this stochastic nature will be taken into account by solving each problem multiple times and performing some statistical analysis. For this illustrative example, however, only one attempt has been considered for each method, simply to illustrate how the methods are used and what results may look like. No conclusions about the efficacy of any of the methods should be drawn from this one experiment. The focus of this book is not on benchmark problems such as Equation \eqref{orga2aeda6} but on problems which arise in process systems engineering, as we shall see in the next chapter.

3. Chlorobenzene purification process

The design and optimisation of process flowsheets is a challenging task due to the nonlinear models required and the multi-criteria nature of the objectives for evaluating alternative designs [Biegler et al. 1997; Grossmann and Kravanja 1995]. A process flowsheet consists of a number of processing steps, often referred to as units, with streams connecting the steps. The problem of design is to determine the operating conditions and sizing parameters for the units in the process to achieve desired objectives subject to a number of constraints, both physical (or chemical) and economic.

Process systems engineers often use simulation software to investigate the impact of design parameters, e.g. distillation column size, on their desired objectives. Automating the process requires interfacing optimization methods with simulation software. General modelling languages can be interfaced with Julia: see the OpenModelica interface as an example [Shitahun et al. 2013]. In this case study, the Jacaranda system [Fraga et al. 2000] is used as the simulation tool.

Optimization methods using a simulation system as the model for an optimization problem will need to be able to handle the noise introduced by simulation software due to iterative methods in simulation and convergence tolerances, the possible failure of simulation, and potentially discontinuous objective functions. Nature inspired optimization methods are therefore attractive for this type of optimization problem.

3.1. The process

The case study is the optimisation of a purification section in the production of chlorobenzene. In the overall process, large quantities of benzene are used. Due to the partial conversion in the reaction section of the process, significant quantities of un-reacted benzene would be wasted if not recycled. To recycle the benzene, a stream consisting of primarily benzene needs to be further purified to ensure that the benzene sent back upstream in the process is pure enough to not affect the reaction. The case study we consider here is the design of this purification stage of the overall process.

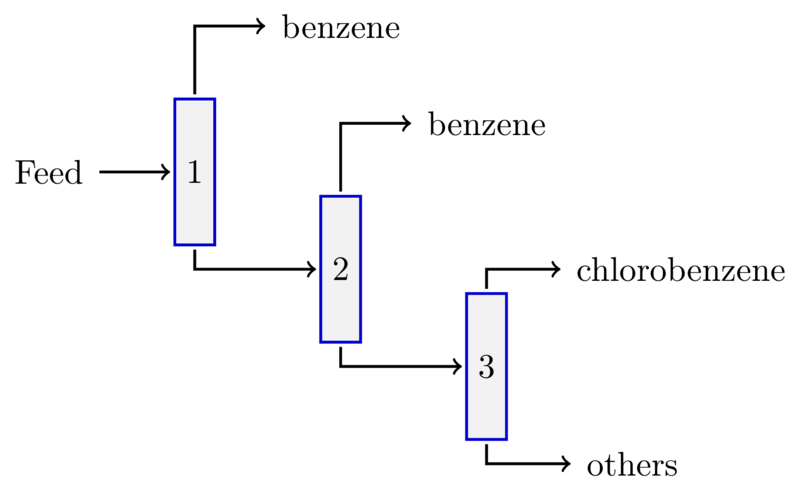

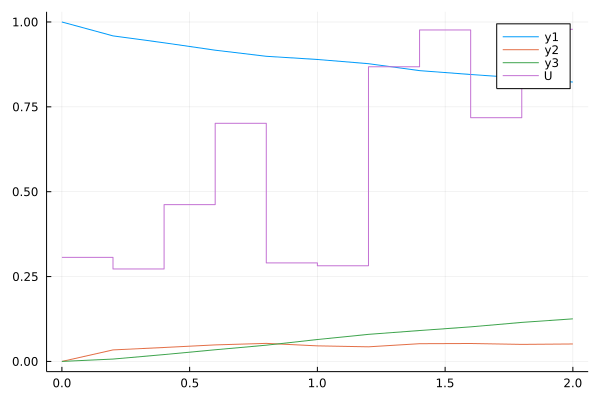

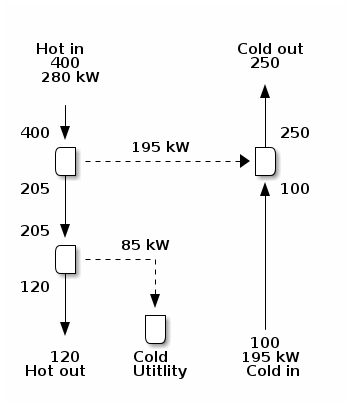

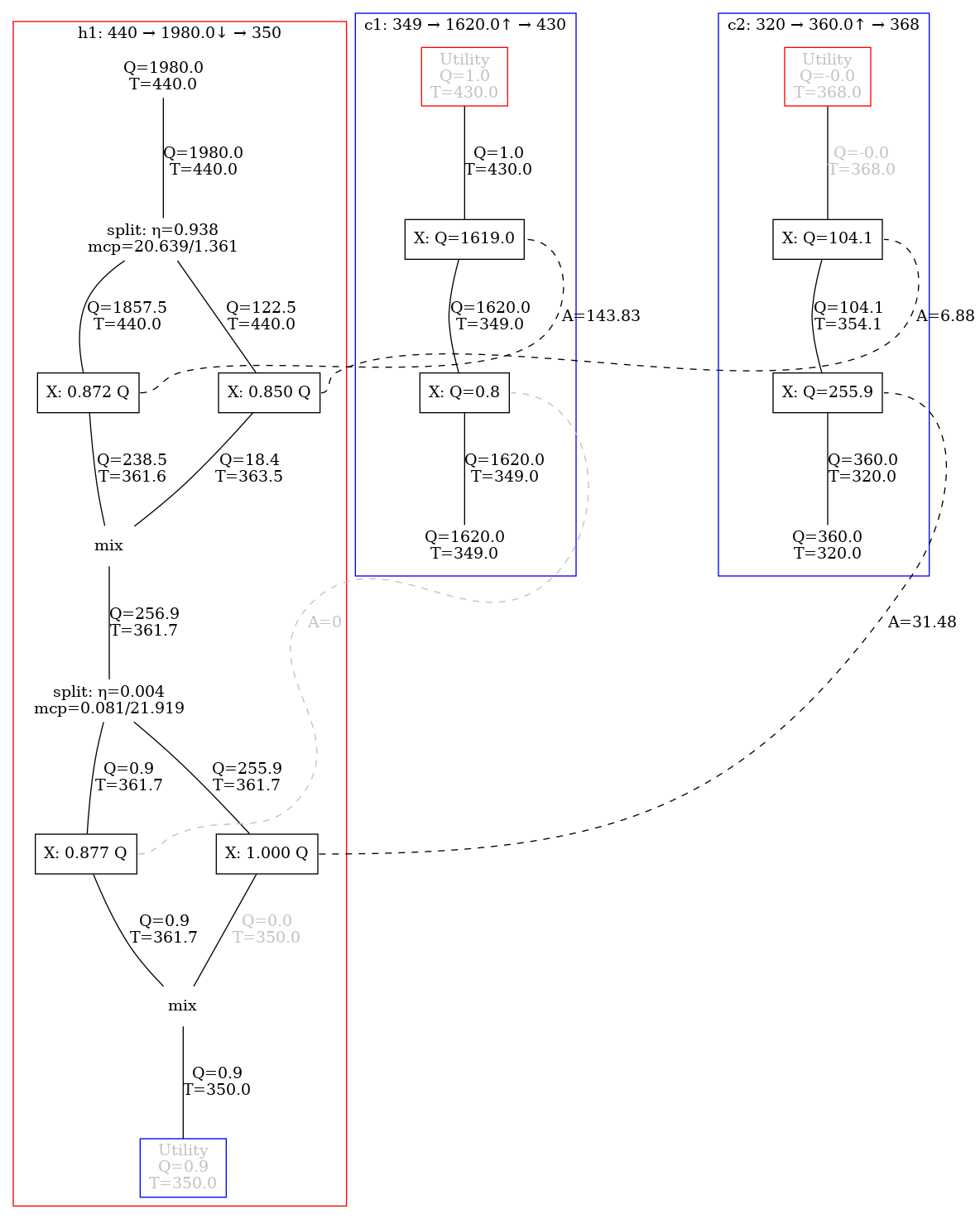

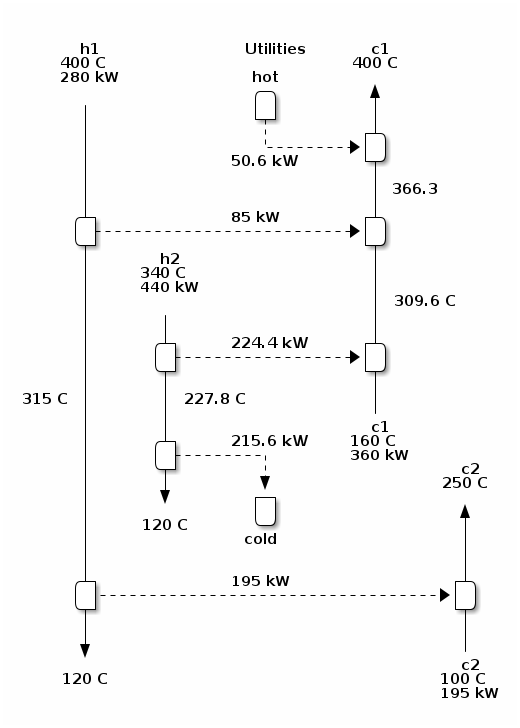

The stage comprises three distillation units with a feed stream described in Table 1. Although the main aim is to purify the benzene recycle stream, the feed stream to this stage also contains some amount of the main product of the process, chlorobenzene. It is desirable to separate this product as well in sufficiently pure form and to avoid unnecessary loss. The process structure we consider is presented in Figure 1.

| Component | Flow | ||

|---|---|---|---|

| kmol s-1 | |||

| 1. | Benzene | \(C_6H_6\) | 0.97 |

| 2. | Chlorobenzene | \(C_6H_5Cl\) | 0.01 |

| 3. | Di-Chlorobenzene | p-\(C_6H_4Cl_2\) | 0.01 |

| 4. | Tri-Chlorobenzene | \(C_6H_3Cl_3\) | 0.01 |

| Pressure | 1 atm | ||

| Temperature | 313 K |

Figure 1: Process structure for the chlorobenzene purification stage showing three distillation units (numbered) with the desired product streams, benzene and chlorobenzene, and one waste stream, ``Others''.

To assess the different alternatives, the economic criteria of capital and operating costs are used. Process models are required to determine the impact of design decisions on these criteria. For this problem, the process models, in Jacaranda, are based on the Fenske, Underwood, and Gilliland short-cut models and correlations often used for multi-component distillation column design [Rathore et al. 1974; Monroy-Loperena and Vacahern 2012].

These models are based on the concept of light (most volatile component in the separation) and heavy (least volatile of the components) key species. Essentially, if the species in a mixture are sorted according to their boiling points, the mixture can be separated between any two adjacent species. For instance, if there were a mixture with three species, A, B, and C, with increasing boiling points from A to C, there would be two possible separations for this mixture. The first would be between A and B, yielding two streams, one with almost all of the A but a little B and one with a little of the A, most of the B, and essentially all of the C. The second separation possible would be between B and C: one of the resulting streams from this separation would have all the A, most of the B and a little C and the other stream would have a little B and most of the C.

The Fenske equation determines the minimum number of stages, \(N_{\min}\), a distillation column will require to achieve a given separation identified by the recovery of the light and the heavy:

\[ N_{\min} = \frac {\log \frac {x_{D,l}x_{B,h}} {x_{B,l}x_{D,h}}} {\log \alpha_{l,h}} \]

where \(D\) refers to the distillate or tops product of the distillation unit and \(B\) to the bottoms product. \(x\) is the molar fraction of each species in the streams, \(\alpha_{l,h}\) is the relative volatility of the light key to the heavy key. Volatility is a measure of the vapour pressure, a property of each species, with higher values of volatility associated with the lighter species. The relative volatility of a pair of species is the ratio of their volatilities.

The compositions of the light and heavy keys in the product streams are a function of the recovery design variable. The recovery specifies the fraction of the key species that ends up in the desired output stream. The higher the recovery, the smaller the amount of each key that goes out with the other stream. Higher recovery is required for higher purity of the final product streams but there is direct relationship between the recovery and the size of the distillation column and hence its cost, both capital and operating.

The Underwood equation determines the minimum amount of reflux, the liquid sent back into the column from the top stream to aid in achieving the separation:

\[ R_{\min} = \sum_{i=1}^{n_{c}} \frac {\alpha_{i} x_{D,i}} {\alpha_{i}-\theta} \]

where \(\theta\) is the solution to the nonlinear equation

\[ \sum_{i=1}^{n_{c}} \frac {\alpha_{i}x_{F,i}} {\alpha_{i} - \theta} = 1 - q\]

where \(F\) indicates the feed stream, \(n_{c}\) is the number of species involved in the feed stream and \(q\) is the state of the feed stream. The state depends on the energy in the stream and that is a function of the temperature and the pressure of the stream.

The actual number of stages, above the minimum \(N_{\min}\), and the actual reflux, also above the minimum \(R_{\min}\), are correlated through the Gilliland equation. This is also a nonlinear equation and depends on another design variable, the reflux rate factor. The higher the factor, the larger the actual reflux rate used. The reflux rate affects the operating costs through the use of cooling and heating utilities: larger reflux means increased utility use. It also influences the capital cost: higher reflux leads to a wider column but one with less stages, usually leading to a lower capital cost overall.

In all of the above equations, the calculation of variables such as \(\alpha\) and \(q\) require the estimation of physical properties. Physical property models are typically nonlinear. For this problem, we have used the Antoine equation to determine vapour pressures, \(p^{*}\) used to calculate the relative volatilities, \(\alpha\), of the species:

\[ \log p^{*} = A - \frac {B} {T - C} \]

where A, B and C are the Antoine coefficients which can be found in reference books for many species. The \(log\) function used will depend on the source of the Antoine coefficients and may be base 10 or natural.

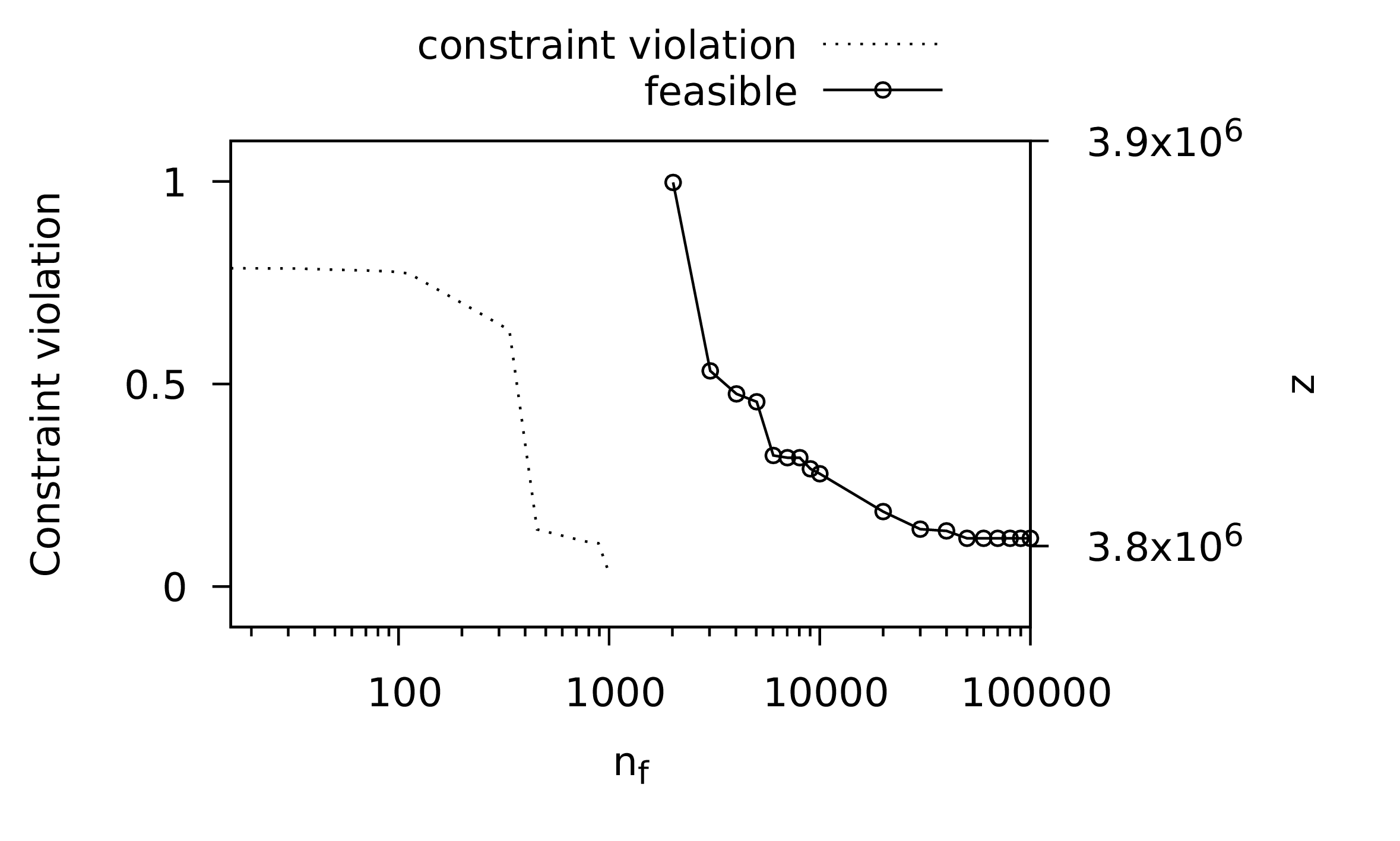

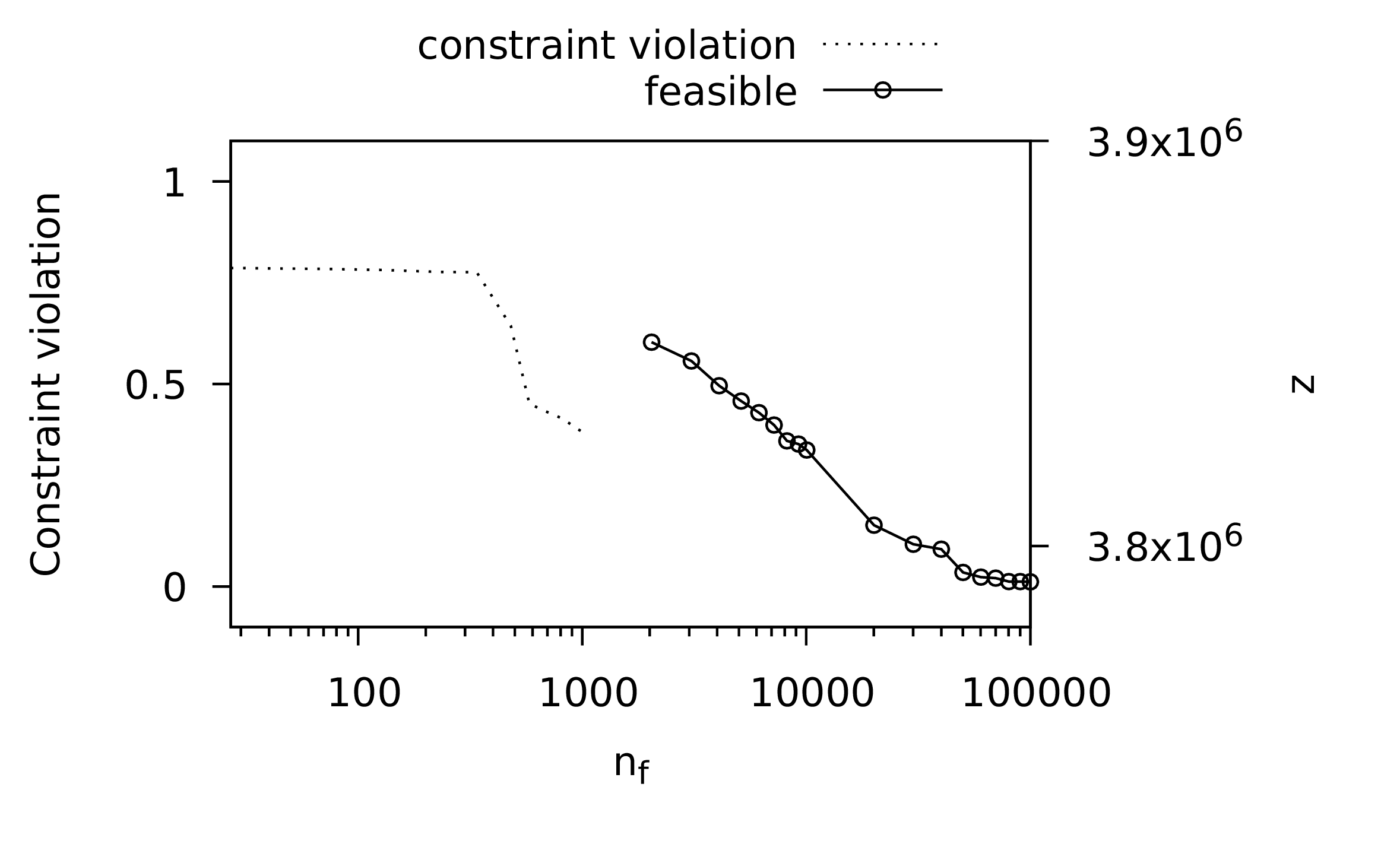

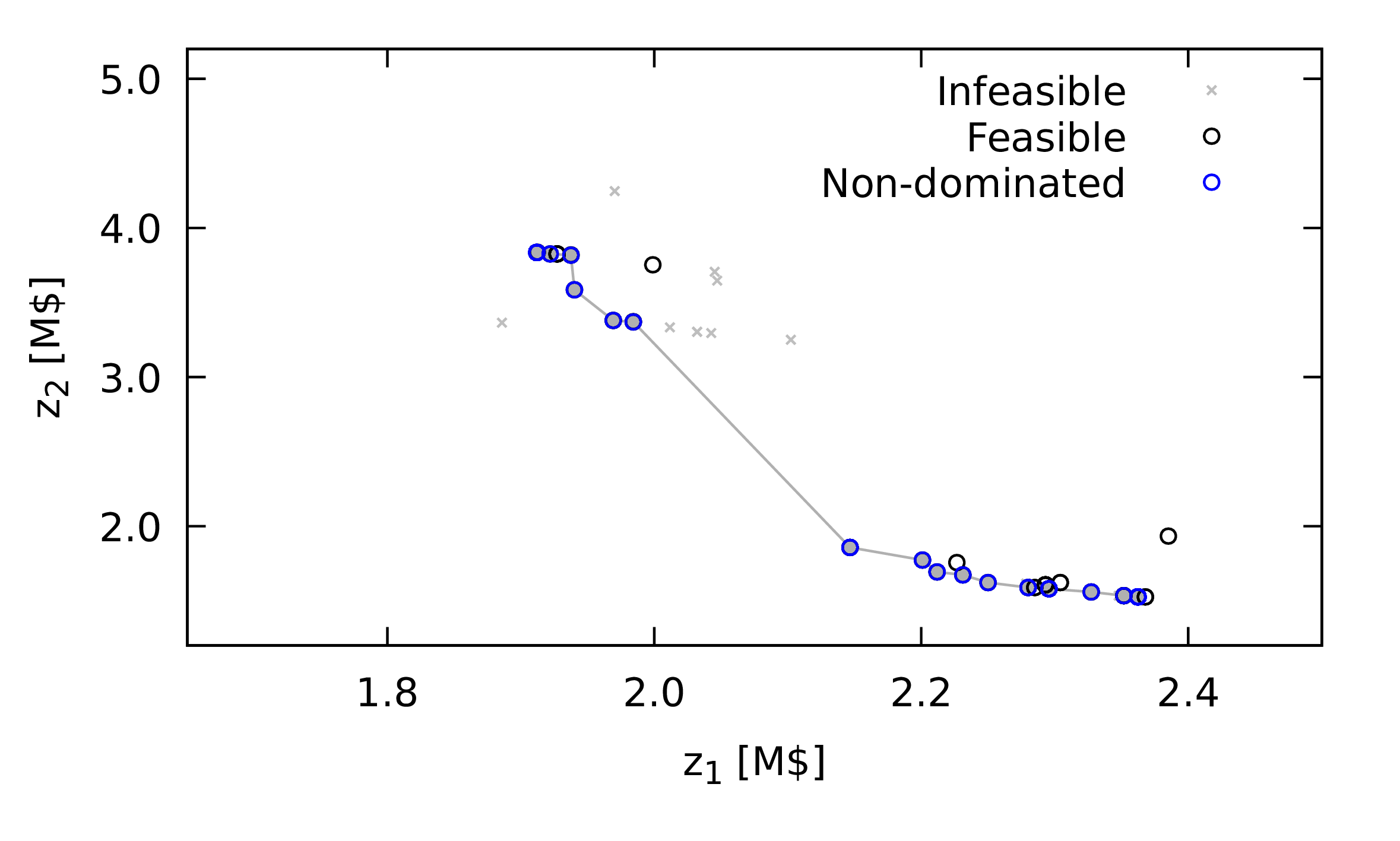

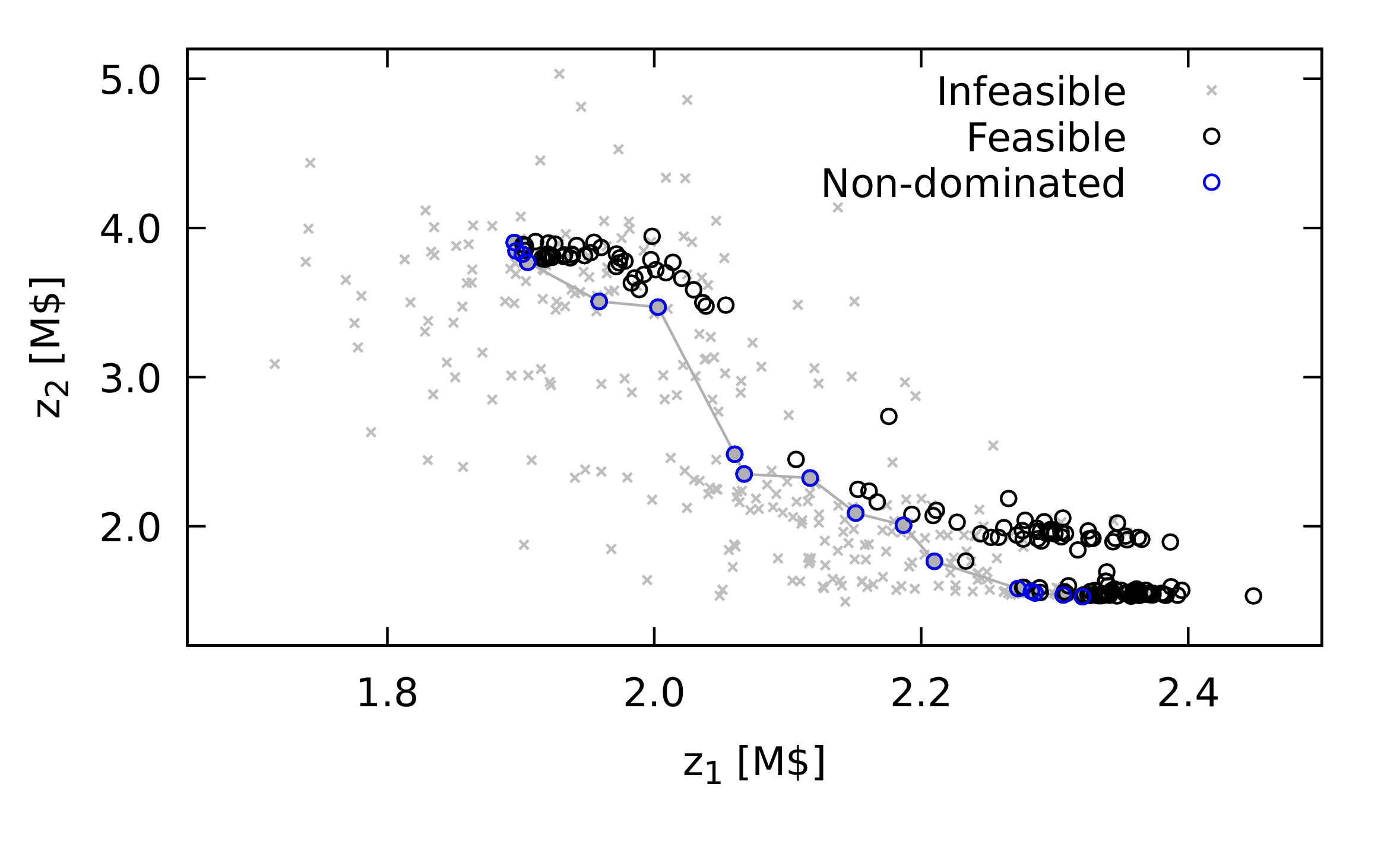

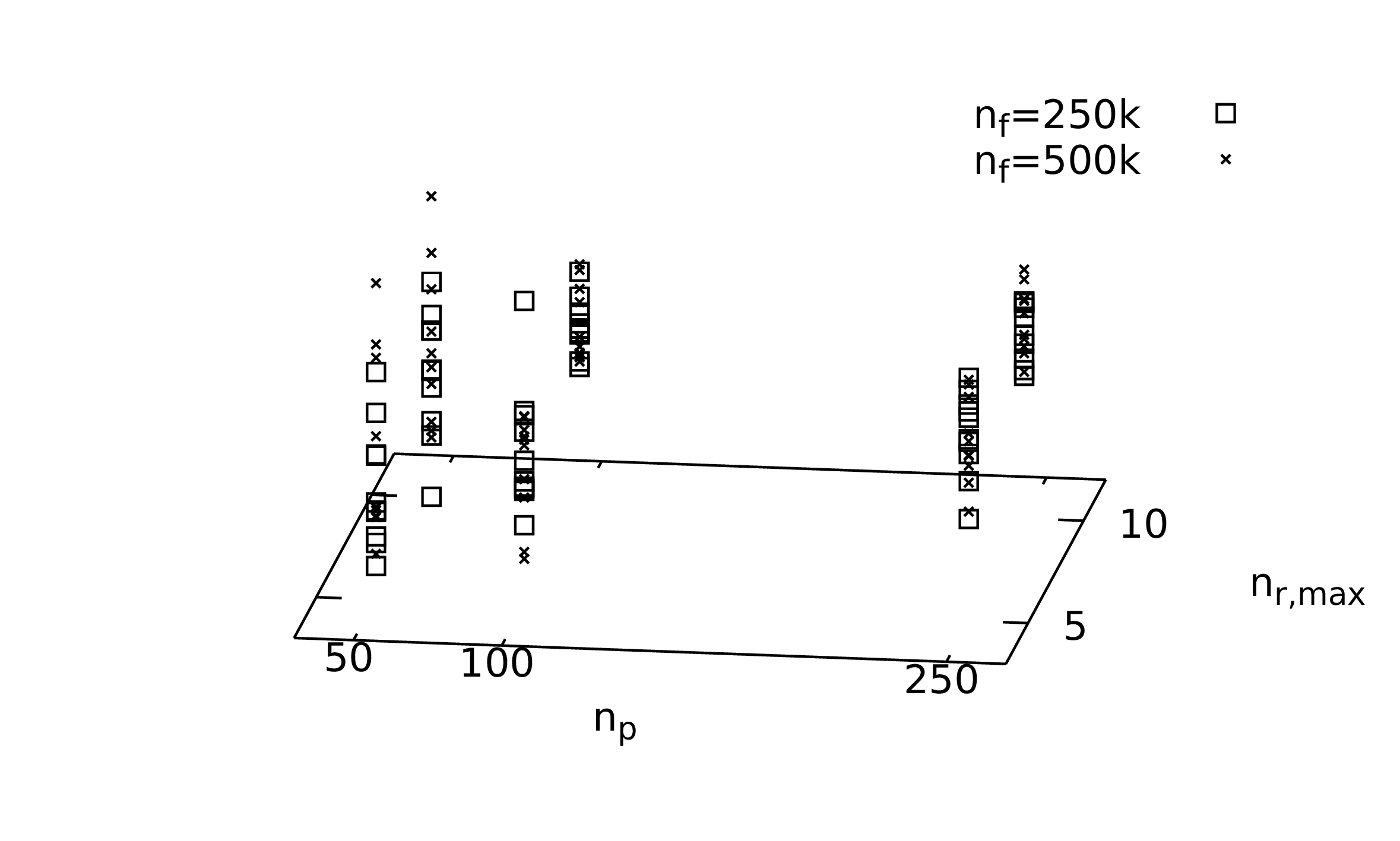

The relative volatilities of the species are the ratio of their vapour pressure to one reference species in the mixture. The temperatures will depend on the operating pressure, the third design variable for the distillation unit. Higher pressures will results in higher temperatures and smaller relative volatilities. The former will affect the choice of heating and cooling utilities; the latter will affect the number of stages required for the separation required.