UCL Division of Psychology and Language Sciences

Book your place here

View our Master's programmes

UCL PALS has a strong commitment to sustainability.

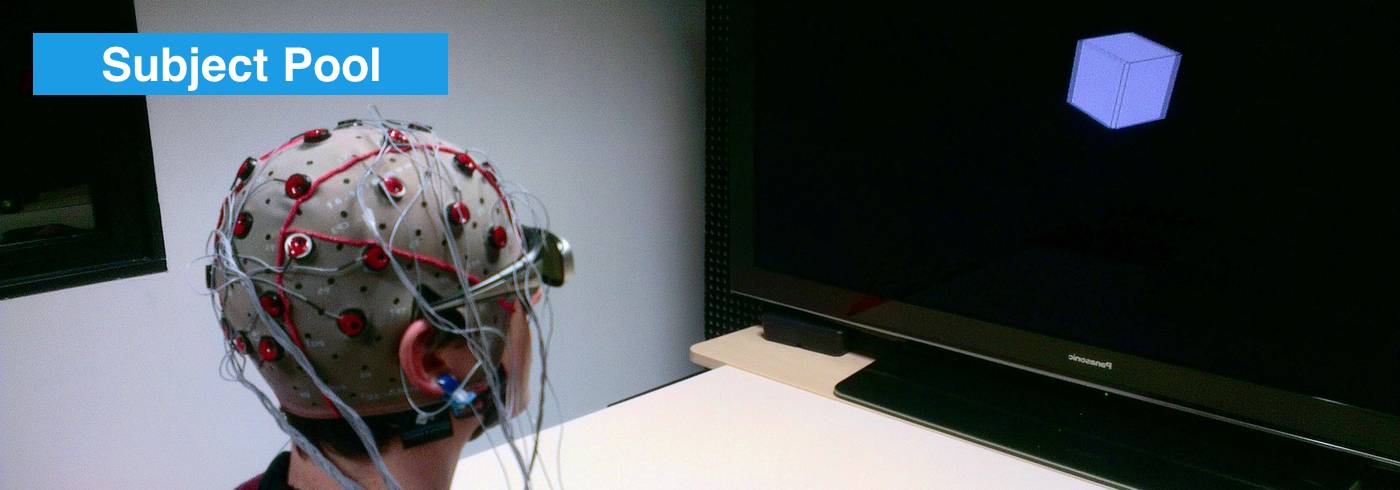

PALS runs a 'Subject Pool' platform where researchers can recruit participants and people can sign up to take part in studies.
