Recent news

- 01/11/2017: Sound Talking

"Sound Talking" is a one-day event at the London Science Museum that seeks to explore the complex relationships between language and sound, both historically and in the present day. It aims to identify the perspectives and methodologies of current research in the ever-widening field of sound studies, and to locate productive interactions between disciplines.

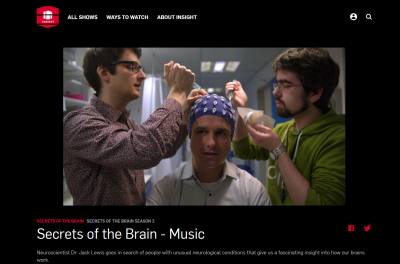

- 11/10/2017: "Secrets of the Brain"

- 28/09/2017: in2science lab image award

Many congratulations to Mivic, our in2science summer student, who won first prize in the lab photo competition at London DeepMind.

- 15/09/2017: 5 generations at the International Conference on Auditory Cortex

The International Conference on Auditory Cortex was an unforgettable experience in all respects! We are grateful for travel awards to Alice Milne, Kate Molloy, Sijia Zhao and Maria (as invited speaker).

Our family tree: "Great Great Grandpa" John Rinzel, "Great Grandpa" Shihab Shamma, "Grandpa" Jonathan Simon, "Mom" Maria Chait, "Dad" Fred Dick and representatives of the current generation: Sijia Zhao and Kate Molloy.

- 17/08/2017: Our in2science students are here!

Our in2science students, Stefania and Mivic, are here! And who better to practice EEG setup on than the lab PI?

- 29/07/2017: All the best, Ulrich!

Saying goodbye to Ulrich, who is moving back to Austria to take up a position at the University of Vienna.

We forgot to take photos at his farewell dinner, but here is a photo of his 'lab mug' :-)

- 27/07/2017: Our work covered on BBC's 'Science in Action'

Our work on the effect of gaze on listeners' sensitivity to sounds is featured on BBC World Service's 'Science in Action'. Listen: here (from 8:40)

- 05/07/2017: Gaze direction affects sensitivity to sounds

Ulrich's new paper is out.

UCL news link

Unfortunately, the Daily Mail have completely misinterpreted our findings.

- 15/06/2017: Congrats Mathilde

...for a successful upgrade to 'PhD candidate'!

- 31/03/2017: Farewell Ed!

Saying goodbye to Ed. After 4 years at the Ear Institute, he is moving to work with Matt Davis at the MRC CBU.

- 08/03/2017: Congrats Sijia

Sijia’s poster was awarded a runner-up prize in the Brain Science & Life Sciences section of the Doctoral School Poster Day.

- 15/02/2017: Busy at ARO!

Daniel Bates presenting his poster: "Attentive tracking of auditory streams in the presence of static or dynamic distractors"

Kate Molloy presenting her poster: "Auditory figure-ground segregation can be impaired by high visual load"

Mathilde Le Gal de Kerangal at her poster: "The effect of distraction by brief transients on auditory change detection"

Rosy Southwell presenting her poster: "Sequence predictability is associated with enhanced error responses in humans"

Ulrich Pomper presenting his work on "The impact of visual gaze direction on human auditory scene analysis"

Sijia Zhao presenting her poster: "Sensitivity to Non-Deterministic Pattern Change in Rapid, Stochastic Tone Sequences"

- 20/01/2017: Secrets of the Brain - filming

The crew from Lambent Productions were filming in the lab today. The footage will be part of the documentary series 'Secrets of the Brain'.

In the photo are Ulrich Pomper (postdoc) and Robert Jagiello (MSc student) prepping presenter Jack Lewis for an EEG experiment.

- 20/01/2017: Upcoming talks and presentations

24/01/2017 Maria Chait "How the brain detects patterns in sound sequences" Neuroscience Center, University of Geneva, Switzerland.

02/02/2017 Maria Chait "The auditory system as the brain’s ‘early warning’ system – Behavioural, eye tracking, and functional brain imaging investigations in humans". Eaton Peabody Laboratories, Massachusetts Eye and Ear Infirmary, Boston, USA.

27/02/2017 Maria Chait "How the brain detects patterns in sound sequences". Collège de France, Paris, France.

- 18/01/2017: 'Action on Hearing Loss' Research Day

Mathilde's PhD research, on the effects of aging and hearing loss on auditory scene analysis, is funded by Action on Hearing Loss (AoHL).

Here she is talking about her work at AoHL's Research Day.

- 02/01/2017: Theme issue ‘Auditory and visual scene analysis’

A new paper from our lab is part of a special issue of Philosophical Transactions of the Royal Society B devoted to auditory and visual scene analysis.

- 01/01/2017: Happy New Year

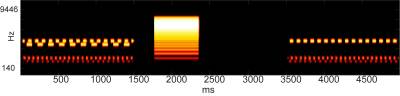

As the web fills up with sunset and sunrise photos marking the dawn of a new year, here is our contribution: a spectrogram from one of our stimuli.
